Shifting focus from raw power to adaptive interfaces in the era of AI parity.

As we progress towards Artificial General Intelligence (AGI), the Large Language Model (LLM) arms race intensifies. Companies compete across multiple dimensions: cost efficiency, model size, compute power, speed, reasoning benchmarks, and context length, all in an effort to create the best AI assistants. Unquestionably, advancement in these dimensions is what makes these products feel so magical: so fast in their processing, so vast in their knowledge, and so natural in their articulation. But these dimensions only tell part of the story.

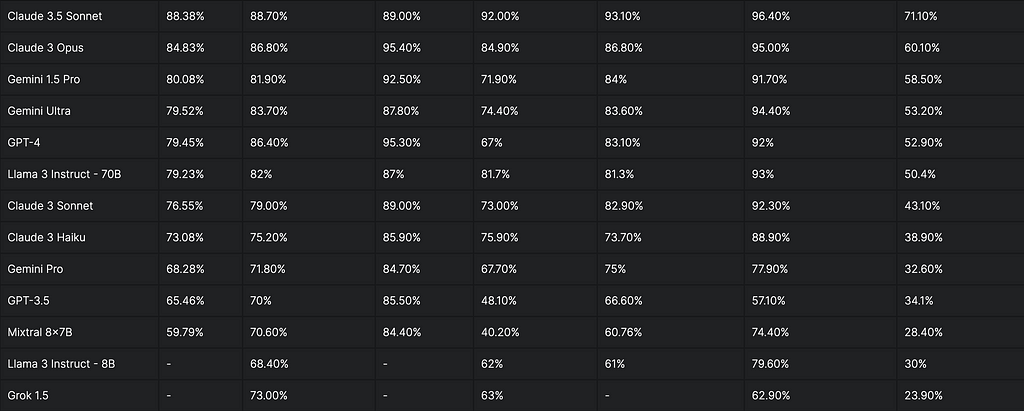

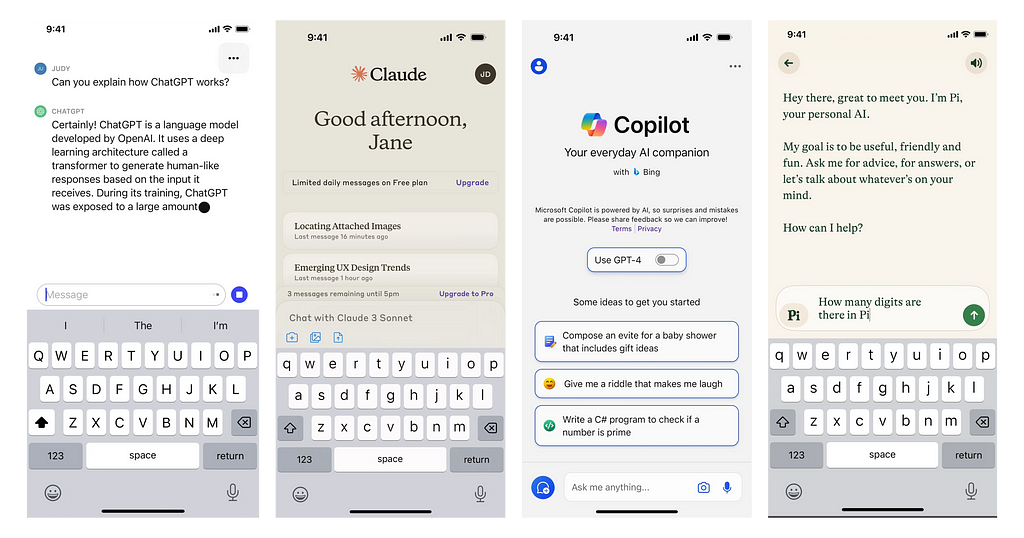

As LLMs become more computationally excellent and efficient, a critical shift is occurring in how we evaluate and differentiate them. In 2024, we have observed that the best LLMs are no longer leaps and bounds ahead of their competitors, and a computational edge does not necessarily translate to a superior product. The performance gap between leading models will likely continue to narrow, with incremental improvements becoming less noticeable to the average user.

“I think if you look at the very top models, Claude and OpenAI and Mistral and Llama, the only people who I feel like really can tell the difference as users amongst those models are the people who study them. They’re getting pretty close.” — Ben Horowitz, The State of AI with Marc & Ben.


Does it actually matter whether an AI model has 500 billion or 2 trillion parameters? To the average user, what is truly perceptible are the tangible effects of using an AI product and the results it produces. After all, it’s not necessarily the size of the model that drives its adoption and retention but rather the specific use cases it addresses, the relevant interaction modalities it offers, and the types of cognitive empowerment it provides.

This convergence signals that we are approaching a plateau of near-uniformity in raw performance, where further technical advancements may yield diminishing returns in terms of user-perceived value. At this juncture, the true differentiator emerges: the quality of user experience.

Intelligence is about focusing on what matters:

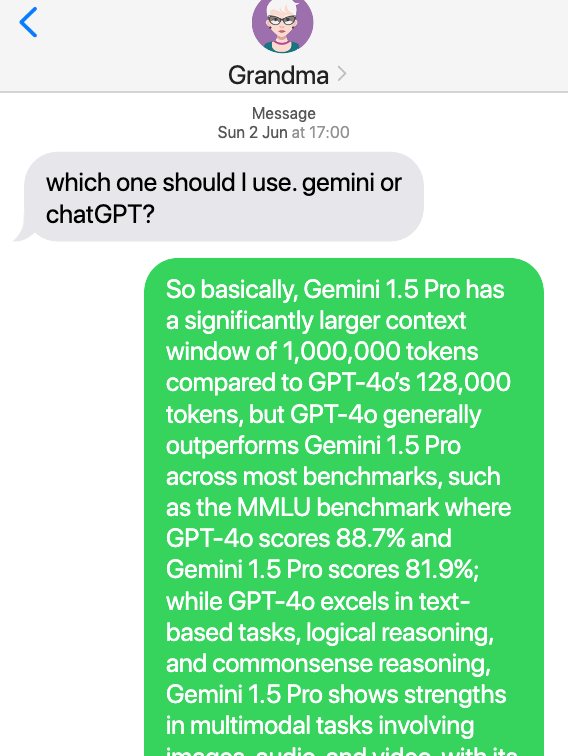

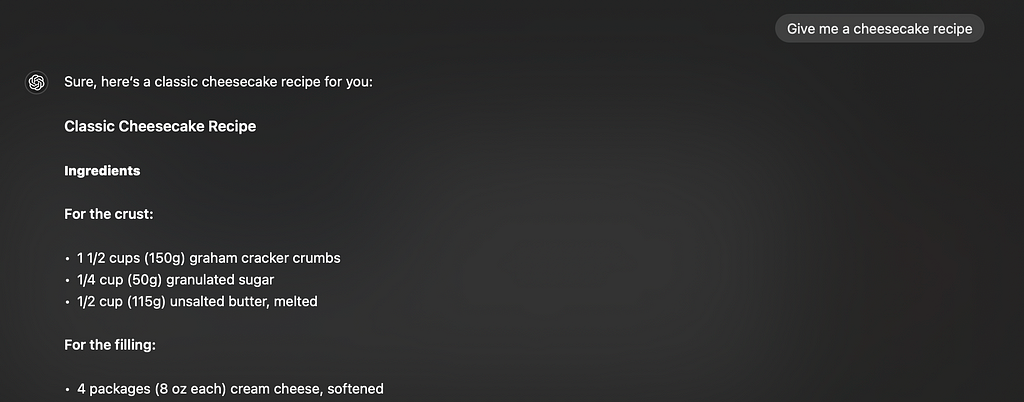

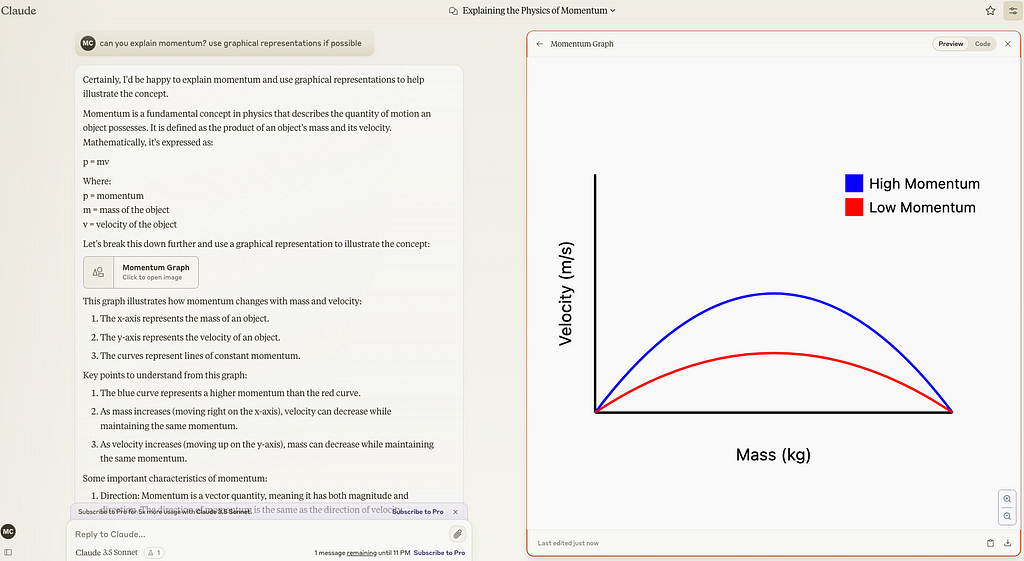

Looking at the images above, consider which of the two responses given to ‘Grandma’ is more appropriate (assuming that Grandma is not a technical person, but an average user curious about AI).

Now consider if ‘Grandma’ were chatting with an AI assistant instead. The image on the left shows a response that is true, detailed and accurate, but…borderline tone-deaf. The information is dense, technical, opaque, and inaccessible to the layman. The response feels less intelligent because it fails to adapt to the needs of the user and cannot find an alternative way to communicate effectively.

The image on the right shows a vastly oversimplified response that might not satisfy the technical mind. However, it is the response that ultimately works for ‘Grandma’, intelligently engaging her in a way that she is likely to understand. It does not seem right to tell ‘Grandma’ to adapt and prompt better. The superior AI assistant should adapt dynamically to her needs. Why should ‘Grandma’ have to adapt to the LLM and not the other way around?

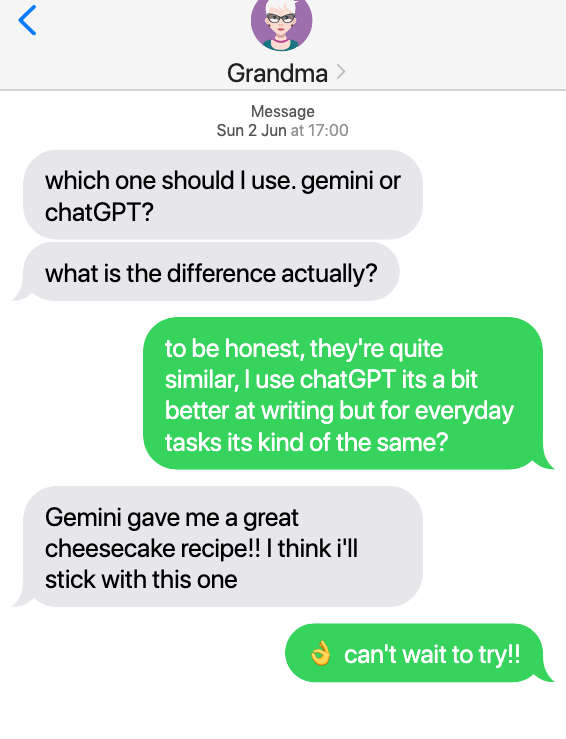

‘User centricity’ is more important than ‘prompt engineering’

The current paradigm for interacting with LLMs is to tell users to get better at prompt engineering in order to optimize LLM responses, but this demand looks short-sighted and is likely to be short-lived. If your AI assistant cannot figure out a workaround for an ambiguous prompt, then perhaps it is your product that is flawed. Successful AI products will need to shift the burden off the user, rather than blaming them for sub-par prompting.

The shift: from power to usability

The billion-dollar question shifts from ‘How powerful is the model?’ to ‘How effectively can users harness this power?’ We begin to direct our focus toward innovating on human-AI interfaces, and to ask of AI products:

- How smoothly can they integrate into, or change, our workflows?

- How significantly do they enhance our problem-solving capabilities and understanding of the world?

- How well can they deliver what ‘truly matters’ to users?

This paradigm shift challenges us to look beyond the technical specifications and consider how these advanced models can be tailored to augment human capabilities in meaningful ways, facilitating cognitive offloading and enhancing our mental capacities where it matters most. The next phase of LLM evolution will likely be defined not by incremental improvements in raw performance, but by revolutionary advancements in human-AI interfaces that properly leverage these powerful models.

The persistence of chat interfaces

The prevalence of chatbot and terminal-style interfaces for AI assistants represents a crucial starting point in human-AI interaction. They gained widespread adoption due to their familiarity and effectiveness, rooted in our collective understanding of conversational paradigms. AI assistants have so far brought us to a good stage, where it feels like we are texting an extremely intelligent friend, but chat interfaces come with limitations.

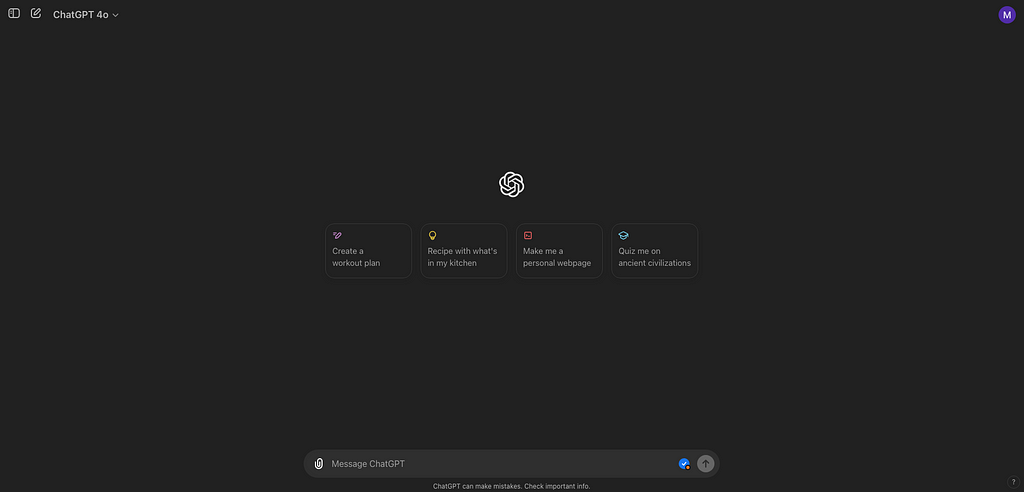

The “blank canvas” problem

Chat interfaces often present users with an empty text box, which can be intimidating and lead to uncertainty about how to begin or what the system is capable of. This is sometimes referred to as the “blank canvas” problem, where users face analysis paralysis when confronted with an open-ended input field.

Lack of affordances

Unlike traditional GUIs, pure chat interfaces lack visual indicators of possible actions. In the absence of clear affordances, users may experience increased cognitive load as they attempt to guess the correct commands or inputs.

Misleading simplicity of natural language

The open-ended nature of natural language can lead to ambiguous inputs and mismatched expectations.

Not everyone is a ‘texter’

Some people are less comfortable taking in information as plain text or numbers. This text aversion can hinder their ability to interact effectively through chat. An intelligent AI should meet users where they are, rather than demanding better prompting or language ability.

“A lot of times, people don’t know what they want until you show it to them.”

— Steve Jobs

Augmented chat interfaces: improved guidance through UI guardrails and personalization

To address the limitations of purely text-based AI interactions, tools like Perplexity.ai are incorporating more guidance and contextual UI elements. These augmented chat interfaces adapt to specific query types, demonstrating contextual awareness by offering counter-prompts and suggestions. This approach helps users frame their questions more effectively, ultimately improving the response quality. By integrating visual cues, structured input options, and dynamic UI elements alongside the chat interface, these tools create a more intuitive and guided user experience that bridges the gap between traditional GUIs and conversational AI.
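The idea of adapting to the query type and offering counter-prompts can be sketched in a few lines. This is a minimal illustration only, not how Perplexity.ai or any other product works: the keyword rules, the `classify` and `augment` functions, and the follow-up questions are all hypothetical, and a real system would more likely use the model itself to detect intent.

```python
import re
from dataclasses import dataclass, field

# Hypothetical keyword rules standing in for real intent detection.
QUERY_RULES = {
    "travel": {"flight", "hotel", "trip"},
    "shopping": {"buy", "price", "cheapest"},
}

# Hypothetical counter-prompts shown next to the answer for each query type.
FOLLOW_UPS = {
    "travel": ["What dates are you travelling?", "Where are you departing from?"],
    "shopping": ["What is your budget?", "Do you have a preferred brand?"],
}


@dataclass
class AugmentedTurn:
    query: str
    query_type: str
    suggestions: list[str] = field(default_factory=list)


def classify(query: str) -> str:
    """Return the first query type whose keywords appear in the query."""
    words = set(re.findall(r"[a-z]+", query.lower()))
    for query_type, keywords in QUERY_RULES.items():
        if words & keywords:
            return query_type
    return "general"


def augment(query: str) -> AugmentedTurn:
    """Attach contextual follow-up suggestions that the UI can render as buttons."""
    query_type = classify(query)
    return AugmentedTurn(query, query_type, list(FOLLOW_UPS.get(query_type, [])))
```

Calling `augment("When does the flight to Tokyo land?")` would yield a turn tagged as travel with two suggested follow-ups, which the interface could display as tappable chips instead of leaving the user to guess what to type next.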

Here’s a great quote:

“So the disruption comes from rethinking the whole UI itself. Why do we need links to be occupying the prominent real estate of the search engine UI (referring to Google)? Flip that.”…

“The next generation model will be smarter. You can do these amazing things like planning, like query, breaking it down to pieces, collecting information, aggregating from sources, using different tools. Those kind of things you can do. You can keep answering harder and harder queries, but there’s still a lot of work to do on the product layer in terms of how the information is best presented to the user and how you think backwards from what the user really wanted and might want as a next step and give it to them before they even ask for it.”

— Aravind Srinivas, CEO of Perplexity.ai, on the Lex Fridman Podcast

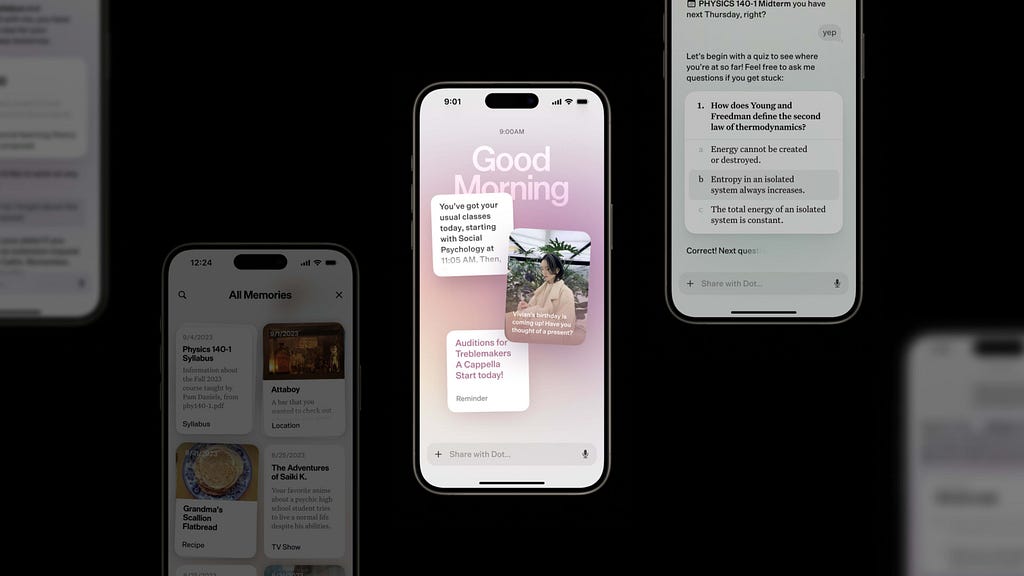

More examples: Apple Intelligence, iOS 18

In iOS 18, Apple Intelligence elevates Siri’s capabilities by integrating context awareness into the user experience. When you ask Siri, “When does my mom’s flight land?”, the system intelligently parses the information relevant to your query, such as your contacts, calendar events, and recent communications. Anticipating that you might need this information quickly, Siri then presents a comprehensive response with details like the airport terminal, departure time, and estimated arrival time, all wrapped up neatly in an unintrusive and digestible interface. By leveraging the familiar iOS interface and enhancing it with AI-powered contextual understanding, Apple Intelligence turns LLMs into practical tools and makes complex queries feel much more natural and useful.

The future: multimodal interfaces

The next phase of LLM evolution will likely be defined by advancements in how we interface with and leverage these powerful tools. Multimodal interactions will move beyond traditional chat interfaces, integrating voice, vision, gestures, and other sensory inputs to create complementary, concurrent streams of interaction. This opens the door for flexible interfaces that can adapt and react to various contexts, environments and specific behaviors.


Generative UI: The future of interface design

The emerging field of generative UI promises to revolutionize how we interact with AI systems. As multimodal models advance, they’re not just processing diverse inputs but learning to generate dynamic, context-appropriate interfaces on the fly.

This leap forward means AI will soon be able to spawn, summon, or manifest different forms of UI to adapt to various use cases and user needs. By understanding the physical interfaces and analogues we use daily, these systems will generate the most effective UI for any given task or explanation.

Imagine an AI that, instead of merely providing text and equations to explain a complex physics concept, generates an interactive mini-game where users can manipulate variables and see results in real time. Or consider an AI assistant that, when helping with home renovation plans, conjures up a 3D augmented reality interface, allowing users to visualize and modify room layouts with gesture controls. These adaptive, intelligent interfaces will bridge the gap between AI’s vast capabilities and human cognitive preferences, making complex information more accessible and tasks more intuitive.
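One way to picture generative UI is as a model emitting a declarative description of an interface, which a front end then renders. The sketch below is only an assumption about what such a description might look like for the physics example: the `generate_ui_spec` function, the field names, and the widget types are all hypothetical, and an ordinary function stands in for the model.

```python
import json


def generate_ui_spec(task: str, variables: dict[str, tuple[float, float]]) -> dict:
    """Build a declarative UI description with one slider per adjustable variable.

    In a real generative UI system a model would produce this structure;
    here it is assembled by hand to show the shape of the output.
    """
    return {
        "type": "interactive_simulation",
        "title": task,
        "controls": [
            {"widget": "slider", "name": name, "min": low, "max": high}
            for name, (low, high) in variables.items()
        ],
        "output": {"widget": "live_plot"},
    }


# Example: a projectile-motion explainer with two variables the user can adjust.
spec = generate_ui_spec(
    "Projectile motion",
    {"angle_deg": (0, 90), "speed_m_s": (1, 50)},
)
print(json.dumps(spec, indent=2))
```

Because the result is plain data, the same description could in principle be rendered as a web widget, a mobile view, or an AR overlay, which is the kind of flexibility the scenarios above depend on.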

New generative UI tools & technologies in 2024

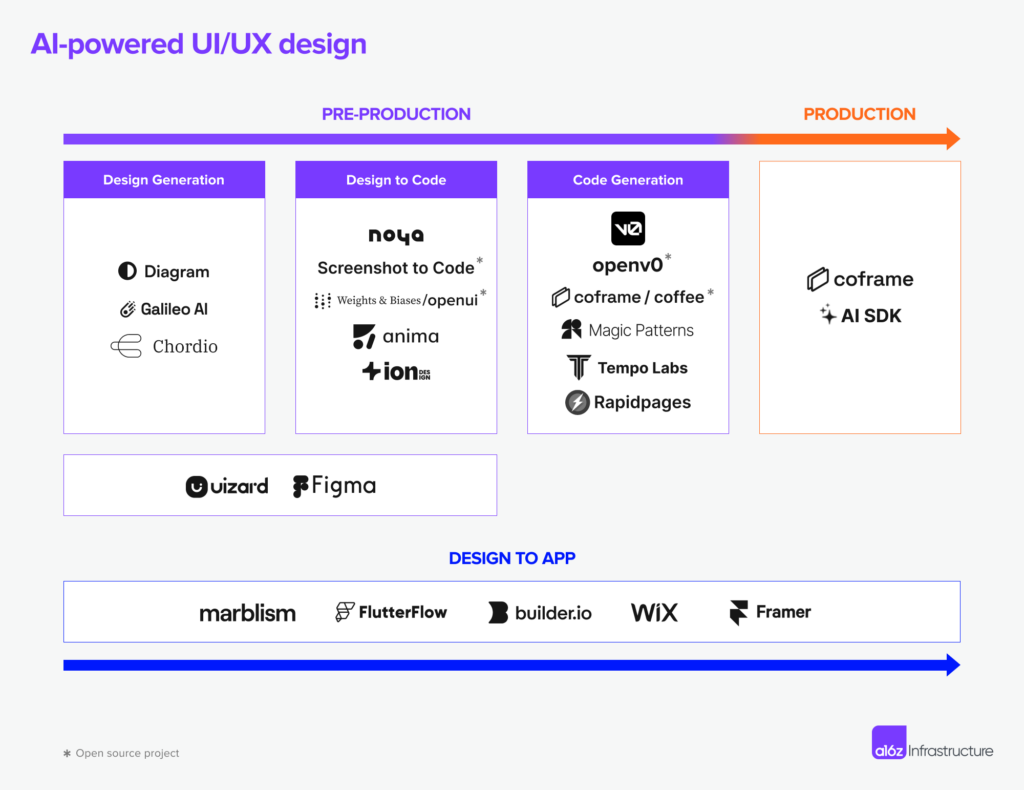

The current tools and tech stack, curated and identified by a16z, showcase end-to-end workflows that leverage machine learning and automation. These tools cover everything from pre-production design generation with Diagram (now part of Figma AI), Galileo AI, and Chordio, to design-to-code conversion using Noya, Screenshot to Code, Anima, and Ion, followed by code generation through OpenV0, Magic Patterns, Tempo Labs, and Rapidpages, and culminating in production with Coframe. Together they facilitate a seamless transition from ideation to implementation. This is a glimpse into the future of generative UX/UI workflows, all powered by AI. One could foresee these tools eventually integrating into a cohesive pipeline, working collectively towards the end goal of generating bespoke interfaces that scale.

To be continued…

We stand at the cusp of a new era in human-computer interaction, one that presents unparalleled opportunities for designers to redefine the very nature of our relationship with technology. Looking beyond the size and compute power of LLMs, designers and developers alike are redirecting their gaze towards the interfaces of the future. In due time, interfaces and experiences will be not just responsive, but predictive and generative, molding themselves into the perfect cognitive prosthetic for each unique user and task.

Thanks for reading!

How UX can be a key differentiator for AI was originally published in UX Collective on Medium, where people are continuing the conversation by highlighting and responding to this story.
