Designing interaction for AI: communication vs information

“Given a speaker’s need to know whether his message has been received, and if so, whether or not it has been passably understood, and given a recipient’s need to show that he has received the message and correctly — given these very fundamental requirements of talk as a communication system — we have the essential rationale for the very existence of adjacency pairs, that is, for the organization of talk into two-part exchanges. We have an understanding of why any next utterance after a question is examined for how it might be an answer.” — Erving Goffman

Communication as a design paradigm for AI

In my previous post on making AI more interesting to talk to, I suggested that it is a mistake to design AI with the type of solutionist mindset that has long characterized the tech industry. Solutionism views all user needs as problems to be solved—often selling solutions before properly identifying the problem. Behind solutionism is a utilitarianism that defines users in terms of needs, technologies in terms of use cases, and use cases in terms of utility. But where solutionism dives straight into solving problems, AI is a more plastic technology, and deserves a less direct methodology.

As things stand today, the ChatGPT and its kin might seems close cousins of search. As the web sprung from the print world, generative AI appears to have sprung from the search world. We prompt GPT with questions, queries, and requests, in ways similar to how we use search engines. But AI is more than search. It doesn’t so much retrieve information as generate it. And it can do so conversationally, meaning that it can respond to users with clarifying questions. It doesn’t have to return results in one shot.

So much of our framing of information problems is based on the search paradigm that we might ask ourselves whether we know how to design for generative AI. Large language models generate what they know. They are interactive, and communicate with users by means of prompts and conversation. They produce information but not by looking up documents for retrieval. Communication is what generative AI does that search doesn’t: it’s a system of obtaining information over multiple conversational turns that allow users to explore their own queries as they are formulating them.

Because large language models don’t so much store information as produce it, I think the design of experiences with AI should emphasize their interactive, communicative, and conversational nature.

Communication-first design

Our approach to the design of LLMs and generative AI should be communication-first. Traditional design principles such as relevance, accuracy, and ease of use should be re-contextualized within a paradigm of communication and interaction, not search and information retrieval.

I make this distinction because the design of any interactive technology should follow the paradigm of user behavior and experience it enables. A search engine enables queries—hence its design should optimize for accurate and relevant results. Large language models enable conversation—hence they should behave as conversation partners.

To adapt our design methods to the new capabilities of generative AI, we need to better understand why communication is a unique design system.

Conversation organizes information

Conversation is itself an ordering or organizing of human expression and communication. It has its own norms and “rules,” its own way of taking time, of mediating relationships between people, and of course of communicating information. Our communication comes out of lived experience, and expresses our individuality, psychology, reasoning, and handling of situations and relationships.

LLMs can have no possible experience of being a conversing subject or person — they’re not alive, and have no lived experience. So they don’t communicate as living and experiencing subjects. But AI is also not just a writing machine. It is quickly becoming a talking, emoting, and soon-to-be acting (agentic) machine. In fact LLMs are being trained multi-modally, and this year’s updates will show advances obtained from multi-modally transferred learnings. Generative AI is becoming increasingly multi-faceted and, in its own way, experienced.

As large language models improve, they will inevitably communicate more like us, not less. And the more they can talk like us, the more important it will be that they do so competently. Conversation becomes more complex, and trickier, the better an agent can do it. The more AI talks like us, the less it is like the simple voice assistants of yesteryear, and the more users will expect that it indeed “understands” them.

As a type of interaction, conversation will present challenges to designers of generative AI—be they developers or user experience designers. Conversation is not visual, is not a “stable” information system or navigational architecture, or evean a familiar mode of interaction. But indeed conversation is an organized form of information exchange. It organizes and structures requests, displays of attention, answers, clarifications, follow ups, recommendations, suggestions, help, and much more. It is perhaps our most complex, capable, facile, flexible, and nuanced mode of information communication available. And for better or worse, the more an LLM can converse, the more that will be expected of it.

We have already seen that the companies behind foundational AI models will opt to throttle the performance of their models rather than encounter user pushback and controversy. I think this is only testament to the importance of communicative competency when employed by a technology. And so testament also to the value that conversation brings to model design.

Should conversational design belong to user experience? To machine learning engineers? Developers? Generative AI has a strong overlap with NLP and so a lot of direction can come from the NLP community. But the experience of conversation is more than the more structured and formalized information space that NLP comes from; and UX is generally closer to the integrations into which AI will be deployed, professionally, and facing customers.

“Talk is an intrinsic feature of nearly all encounters and also displays similarities of systemic form. Talk ordinarily manifests itself as conversation. ‘Conversation’ admits of a plural, which indicates that conversations are episodes having beginnings and endings in time-space. Norms of talk pertain not only to what is said, the syntactical and semantic form of utterances, but also to the routinized occasions of talk. Conversations, or units of of talk, involve standardized opening and closing devices, as well as devices for ensuring and displaying the credentials of speakers as having the right to contribute to dialogue. The very term ‘ bracketing’ represents a stylized insertion of boundaries in writing.” — Anthony Giddens

Machines as conversation agents

Communication is a two-sided system of information exchange. Generative AI will be one side of that system: a language model increasingly capable of intelligent conversation about topics the user is interested in. As users, we will have no choice but to talk to these models as if they were real conversation partners. It would be more difficult, more of a cognitive strain, to try to talk to them as if they were dumb machines. If the models are capable of conversation, of writing and speech, then naturally we should converse with them. Conversation then is the new user interface for generative AI, and design should take a communication-first approach.

But generative AI is not yet a complete or even reliable communicating agent. It struggles to master conversational turn-taking, to stay on topic without drifting, to answer questions satisfactorily, to respond to tone or emotion, and to know when a user is done or when a response is insufficient. It has no memory of user preferences and interests. And though it can imitate styles of speech, it isn’t yet available as a product with consistently unique personalities and styles.

- Large language models can speak but they don’t have a way of “paying attention.” They’re either prompted, or they’re off.

- These models can’t sense feelings or detect attitudes and dispositions of users, except through speech and words. Designers are required to force models through various types of reasoning and reflection to observe and describe user expressions, and then try to prompt the model to account for them in its responses.

- Models are challenged when it comes to taking conversational turns.

- Models are challenged by any use of short-hand in conversational expressions.

- Models have difficulty tracking any conversations between multiple participants if they’re not given the identities of those speaking.

- On account of their training with with human feedback, models can think they know what users want, and so jump to conclusions (solutioning); it may take some “retraining” to design models to be more patient and “open-minded” conversation partners.

Are these short-comings terminal for generative AI? I highly doubt it. There’s too much at stake, and the opportunities are too enticing. In time, we can only assume that large language models will:

- improve on topical knowledge and better feign expertise in them

- become reasonably good at associating sentiment with emotion

- become personalized, and customizable to personal user preferences

- train on common conversational features, turns, bridges, and other cueing aspects of conversation as a flow of communication between participants

- master multiple voices, likely within a single model but also using multiple models

- learn to express themselves in idioms and vernaculars

- become able to remember and anticipate user habits and preferences

- and more.

Users as conversation agents

If the machine side of the conversation involves a technology that is rapidly improving its communicative and conversational abilities, then the human side of the conversation, too, is gaining exposure to talking to AI. But we don’t talk “machine” talk. We can’t “talk down” to AI in some kind of garbled and imperfect machine language. We can only write and speak as we already know how: that is, naturally.

We can only converse with talking machines as if they were real. We will simply talk to the AI on the assumption that it “understands” us, and back off and simplify our interaction when we learn that it doesn’t. This is the uncertain and somewhat ambiguous world we enter as we migrate from the search paradigm (of information retrieval) to the generative world of AI. In search, results are right or not. Amongst right results, some are more relevant than others. But the ambiguity that exists in the responses of an AI don’t undermine the search experience; we have confidence and trust that search works.

There may be a skill to prompting and to prompt engineering, which is a more AI-specific instructional language, but when it comes to natural language interaction, we users want to speak naturally. We will want AI to come to us, to speak normally, before we are required to master all manner of prompting techniques. This will be the challenge for a communication-first design approach: to evolve AI so that it can converse transparently and naturally, and to inspire the confidence that search has achieved.

“Turn-taking is one form of ‘coupling constraint’, deriving from the simple but elemental fact that the main communicative medium of human beings in situations of co-presence — talk — is a ‘single-order’ medium. Talk unfolds syntagmatically in the flow of the duree of interaction, and since only one person can speak at one time if communicative intent is to be realized, contributions to encounters are inevitably serial.” — Anthony Giddens

Conclusion

I can see no other path forward or future for generative AI other than the inevitable and eventual approximation of human communication by generative AI. Large language models present an alternative, and in most ways an upgrade, to the content publishing paradigm of the web.

Marshall McLuhan claimed that any new medium uses an older medium as its content. If that is the case, then perhaps generative AI uses social media as its content. Or communication media generally. It is a medium of communication first, and generates information and content only when prompted.

In my last post I explored the possibility that instead of designing AI to solve user problems we consider the opposite approach: make AI more interesting. Why? Firstly, conversation itself is a more compelling user experience if it’s interesting, and for this the other conversation partner has to be interesting (or at least talk interestingly); secondly, users disclose what they want differently when talking than they do when tapping and clicking and selecting.

From a design perspective, conversation is a two-sided interaction in which the many uses for technology open up as a byproduct of the interaction itself. The challenge now is to identify the methods by which to improve the conversationality of LLMs, and to establish the design goals of converational design.

The paper I’ve excerpted below is but one example of tuning AI to be a better conversation partner. Researchers trained their model on examples of bad conversation in order to make the model better at good conversation. It’s one of many approaches being taken to improve AI’s conversationality.

In subsequent posts I will look more closely at further techniques for improving the conversational skills of AI, and for how to conversation is itself an interface with its own kind of organization.

Zero-shot Goal-directed Dialogue Via RL On Imagined Conversations

Zero-Shot Goal-Directed Dialogue via RL on Imagined Conversations

My Observations:

This research proposes a method for optimizing conversational AI for improved “goal-directed conversation.” The research is inspired by the fact that both users and AI are bad at reaching common ground, and require several turns of conversation to establish basic facts. This is the “grounding gap” addressed in a previous post.

The paper is interesting in how it uses suboptimal human conversations for training the AI, specifically it employs “offline reinforcement learning to train an interactive conversational agent that can optimize goal-directed objectives over multiple turns.” The insight is a bit like adding noise to get better signal — a method used in audio processing and mastering, and in fact diffusion image AI models. The inefficiency of human conversation becomes a means by which AI can optimize its own responses.

Whilst the examples used in the paper focus on a travel booking scenario, we could imagine many others. In fact, an entire commercial playbook of conversation “plot points” might be compiled from the facets, attributes, values, detail, and other types of relationships and dependencies that comprise topical domains. Think recommendation engines and systems as well. And matching engines. AI could be trained or tuned on the “component” parts of a conversation that meets user needs in ecommerce, recommendations, question answer, and customized to terminology specific to a vertical or domain (healthcare, finance, travel, etc).

Is this over-complicating the matter? I don’t think so. The example provided in this paper uses travel, and the “goal-directed” aspect of travel booking is covered by improving the LLM’s knowledge and conversational skill around travel-related matters. But it’s very tightly focused on factual information, customer travel preferences, budget, etc.

There are other ways of framing an orientation than the strictly instrumentalist view that undergirds so many “goal-directed” problem descriptions. A user might want to hit the most instagrammed and popular spots while vacationing; might want to travel off the beaten path; might want to enjoy sporting activities; and so on. The user’s preferences might not be the ones conventionally used on web sites but might instead be influenced by social media reviews, like popular and trending hotspots. Can an AI source its conversation from these kinds of sources? Can it learn how to disclose these kinds of preferences from users through conversation?

Excerpts: Zero-shot Goal-directed Dialogue Via RL On Imagined Conversations

Large language models (LLMs) have emerged as powerful and general solutions to many natural language tasks. However, many of the most important applications of language generation are interactive, where an agent has to talk to a person to reach a desired outcome. For example, a teacher might try to understand their student’s current comprehension level to tailor their instruction accordingly, and a travel agent might ask questions of their customer to understand their preferences in order to recommend activities they might enjoy. LLMs trained with supervised fine-tuning or “single-step” RL, as with standard RLHF, might struggle which tasks that require such goal-directed behavior, since they are not trained to optimize for overall conversational outcomes after multiple turns of interaction. In this work, we explore a new method for adapting LLMs with RL for such goal-directed dialogue. Our key insight is that, though LLMs might not effectively solve goal-directed dialogue tasks out of the box, they can provide useful data for solving such tasks by simulating suboptimal but human-like behaviors. Given a textual description of a goal-directed dialogue task, we leverage LLMs to sample diverse synthetic rollouts of hypothetical in-domain human-human interactions. Our algorithm then utilizes this dataset with offline reinforcement learning to train an interactive conversational agent that can optimize goal-directed objectives over multiple turns. In effect, the LLM produces examples of possible interactions, and RL then processes these examples to learn to perform more optimal interactions. Empirically, we show that our proposed approach achieves state-of-the-art performance in various goal directed dialogue tasks that include teaching and preference elicitation.

….While LLMs shine at producing compelling and accurate responses to individual queries, their ability to engage in goal-directed conversation remains limited. They can emulate the flow of a conversation, but they generally do not aim to accomplish a goal through conversing.

….[An LLM] will not intentionally try to maximize the chance of planning a desirable itinerary for the human. In practice, this manifests as a lack of clarifying questions, lack of goal-directed conversational flow, and generally verbose and non-personalized responses.

….

Whether you as the end user are asking the agent to instruct you about a new AI concept, or to plan an itinerary for an upcoming vacation, you have priviledged information which the agent does not know, but which is crucial for the agent to do the task well; e.g., your current background of AI knowledge matters when learning a new concept, and your travel preferences matter when you plan a vacation. As illustrated in Figure 1, a goal-directed agent would gather the information it needs to succeed, perhaps by asking clarification questions (e.g., are you an active person?) and proposing partial solutions to get feedback (e.g., how does going to the beach sound?).

….

Our key idea is that we can enable zero-shot goal-directed dialogue agents by tapping into what LLMs are great at — emulating diverse realistic conversations; and tapping into what RL is great at — optimizing multi-step objectives. We propose to use LLMs to “imagine” a range of possible task-specific dialogues that are often realistic, but where the LLM does not optimally solve the task. In effect, the LLM can imagine what a human could do, but not to what an optimal agent should do. Conversations are then generated based on sampled hidden states. We train an agent to engage in goal-directed conversation by training offline RL on the resulting dataset.

….

Rather than directly using pretrained LLMs as optimal agents, our method aims to leverage their strength in emulating diverse, human-like, but suboptimal conversations to generate data, which can then be provided to an RL algorithm to actually discover more optimal behaviors. We propose a novel system called the imagination engine (IE) that generates a dataset of diverse, task-relevant, and instructive dialogues to be used to train downstream agents. We evaluate our approach on tasks involving teaching of a new concept, persuasion, and preference elicitation. Our experimental results include a user study that compares agents trained with our method to prompted state-of-the-art LLMs, showing that our method can attain significantly better results in interactive conversations even when using models that are orders of magnitude smaller than the prompt-based baseline.

….

Instruction. In this task, a human asks an agent to teach them about some concept they are unfamiliar with. Specifically, the human will ask the agent about one of five concepts in RL: “behavior cloning”, “policy gradient”, “actor-critic”, “model-based reinforcement learning” and “offline reinforcement learning”. Though this task is similar to general question-answering (Budzianowski et al., 2020), we consider the case where the agent must additionally tailor their instruction to the background knowledge of the human, i.e., how familiar they are with similar concepts. Hence, in this task, the background knowledge of the human constitutes Z of the hidden-parameter MDP.

….

Preference elicitation. Here, the agent must build rapport with the human with the objective of uncovering their underlying preferences. We specifically consider a travel agent task alluded to earlier in our paper, where the agent must recommend personalized activities for the human from a fixed set of activities provided in the task description. We have a set of 18 activities grouped into six categories: nature exploration, beach, wellness, food, shopping, and cultural experiences…the space of hidden parameters Z that affect human behavior is much more complicated. Specifically, in addition to uncovering the human’s activity preferences, the agent must also figure out and account for additional factors such as personality, budget, or family dynamic.

….

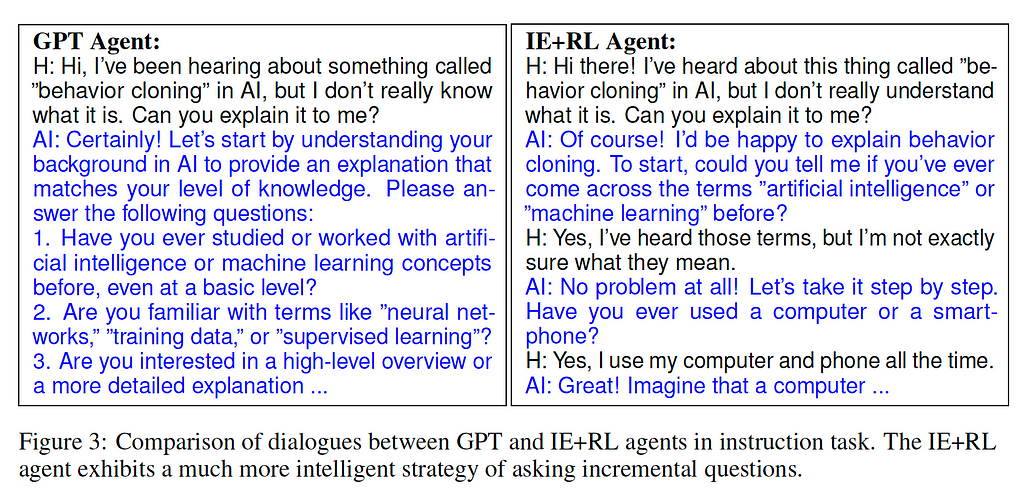

We found that because simulated humans were prompted with a ground-truth activity, it was much easier for agents to elicit their preferences, as the simulated humans would often give straightforward responses regarding what they enjoy (whereas a real human who is unsure what they like will give more vague responses that require gradual probing by the agent)….However, qualitatively, the GPT-agent would generate extremely verbose responses that either consist of a checklist of questions that are tedious to answer, or lists of recommended activities that are not particularly personalized to the user….our IE-RL agent generates significantly more concise utterances with fewer tokens.

….

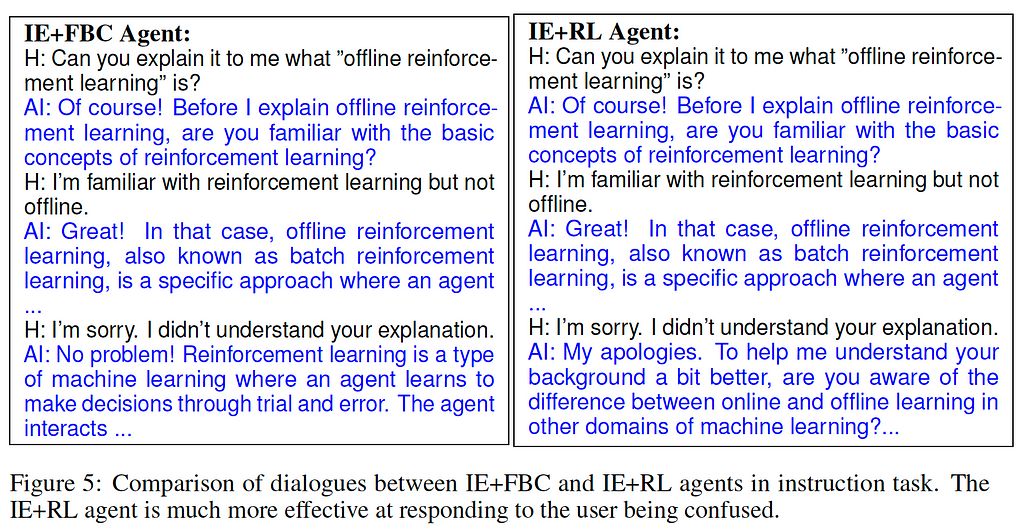

Next, we address the second research question: is training on the imagined data with RL more effective than directly imitating it with supervised learning? Recall that we posit that RL optimization outperforms imitation learning in challenging scenarios where strategies exactly reflected in the data do not suffice. To get such examples, we pose as humans who exhibit potential challenging behaviors and interact with agents. Specifically, in the instruction task, we consider humans who overestimate their understanding of a particular concept. By doing so, an agent’s understanding of the human’s knowledge background will not align with their true background, resulting in the user not understanding the agent’s explanation. Meanwhile, in the preference elicitation task, we consider users who express discontent with the agent’s initial recommendation.

….

Definitions

GPT. This approach prompts GPT-3.5 (OpenAI, 2022), which is a powerful LLM shown in prior work to be able to effectively solve numerous natural language tasks (Ouyang et al., 2022), to directly behave as the agent. The prompt includes both the task description, as well as the insight that the resulting agent needs to gather information about the human user in order to optimally respond to them. This is the traditional usage of LLMs to solve dialogue tasks.

IE+BC (ablation). This version of our approach trains an agent on the imagined dataset generated by our proposed imagination engine, but via a behavioral cloning (BC) objective, where the agent straightforwardly mimics the synthetic data. This is equivalent to supervised fine-tuning on the imagined dataset. This is an ablation of our proposed approach aimed at investigating the second research question.

IE+FBC (ablation). Rather than BC on the entire imagined dataset, this method trains the agent using filtered BC instead, which imitates only the successful trajectories in the dataset. This is another ablation of our proposed approach.

IE+RL. This is the full version of our approach, which trains the agent using offline RL. Specifically, we use ILQL (Snell et al., 2022) as the offline RL algorithm.

AI wants a communication-first design paradigm was originally published in UX Collective on Medium, where people are continuing the conversation by highlighting and responding to this story.

Leave a Reply