
Reaching “common ground” with conversational AI.

“Thus, as Adam Smith argued in his Theory of the Moral Sentiments, the individual must phrase his own concerns and feelings and interests in such a way as to make these maximally usable by the others as a source of appropriate involvement; and this major obligation of the individual qua interactant is balanced by his right to expect that others present will make some effort to stir up their sympathies and place them at his command. These two tendencies, that of the speaker to scale down his expressions and that of the listeners to scale up their interests, each in the light of the other’s capacities and demands, form the bridge that people build to one another, allowing them to meet for a moment of talk in a communion of reciprocally sustained involvement. It is this spark, not the more obvious kinds of love, that lights up the world.” — Erving Goffman

This post is inspired by an insight into human social interaction that explains a lot about the user experience of generative AI.

There is a monotonous and somewhat vanilla verbosity associated with how LLMs write and talk. I don’t think it’s because AI doesn’t use the right words. I think it’s because AI can’t show an interest in talking to us. The “spark” that Goffman refers to in the quote above is the sharing of a mutual interest in conversation and interaction. AI isn’t alive, or conscious, and so what interest it does show is just an artifact of language.

There has been a bit of research into this, but it’s focused on what’s called a “grounding gap” between LLMs and users. The research suggests that AI can be made more conversational by making it better at talking about the topics the user is interested in.

I want to explore whether this approach will make enough of a difference. The limit to LLMs as conversational agents is more than the information and domain expertise they can command and sustain during the course of a conversation.

It is the more fundamental fact that, unable to have a real relationship with users, AI will, at best, only be able to mimic or fake its way through conversation.

What I would like to explore is whether we (users) might find AI more interesting if it were developed to be more interesting itself. This takes the opposite of the approach used in research: instead of training the AI to better master user needs, train the AI to become more interesting as a synthetic personality, on the assumption that users might then find it a more compelling conversation partner. The further assumption is that users will reveal more of their interests, questions, requests, and needs if the AI engages them more effectively in conversation.

The “Presumptive Grounding” of LLMs

It might be an irony of RLHF (Reinforcement Learning from Human Feedback) that large language models are bad at conversation. Research (below) shows that use of human feedback might actually condition these generative AI models to assume they know what human users want and understand. Research has called these models “presumptive grounders.” Generative AI models are presumptive grounders because they don’t make an effort to use conversation to establish what users want.

Models assume common ground exists with the user because they are trained with human feedback to learn human points of view, and rewarded for answers to questions that are most acceptable to us.

The view from the sociology of interaction, however, shows us that common ground is reached during conversation, not before it, and that it is not just an agreement about something between people, but a shared interest in each other’s orientation towards achieving common ground.

Common ground, to the sociologist of human social interaction, is not something done prior to conversation, but something done through conversation. And it is not a feature of language, but a feature of human relationships.

The question for the designer of generative AI, then, becomes: can the model’s “presumptive grounding” be overcome so that in conversation, the model behaves more like a normal person in conversation?

“The issue is not that the recipients should agree with what they have heard, but only agree with the speaker as to what they have heard.” — Erving Goffman

Conversation is UX of AI

In previous posts I have explored the implications of emotive AI for improved interactions with generative AI. And I have explored communication theory for its insights into conversation as a means of exchanging information through interaction and communication.

The user experience of generative AI offers the most to AI design not by improving what these models know, but by improving how they present it. Generative AI may suffer somewhat from the solutionist mindset of the tech industry, coupled with a common assumption that AI will eventually replace search; that is, an assumption that the purpose of LLMs is to answer questions and solve problems.

Where LLMs today are often used to answer a single question or prompt, a much larger horizon of opportunities exists for conversational AI. This would be the space of interaction between models and users; the space in which conversational use of language opens up topics and enables a co-creative domain of exploration. Models could still deliver their know-how, and serve up their knowledge, but through conversation that explores user interests, instead of presuming to know how to satisfy them right away.

We adopt the user experience orientation to re-frame this approach to generative AI in terms of communication, not information. Indeed much of the recent interest in generative AI is in training models on action — what’s called agentic AI. Communication is a type of action: social action. Conversation is a form of social action involving the use of words (action that is linguistically-mediated).

The papers cited below are just a few examples of the type of research being done on LLMs from a communication-theoretical perspective. Research interest in conversational AI and the conversational capabilities of LLMs is not new. Natural Language Processing (NLP) is a well-established field. But LLMs enhance and somewhat complicate the approaches of NLP, as LLMs generate “talk” without the programming and structure behind NLP (for NLP, think Alexa or Siri).

As we will see demonstrated here, the orientation taken up in research is towards solving problems, answering questions, and satisfying user needs and interests from the conventional (solutionist?) point of view. Research is interested in improving the ability of LLMs to quickly “grasp” and respond to user requests. Improvements to model training and fine-tuning focus on making conversation with LLMs tighter, more accurate, more friendly, and so on.

What if, instead of accelerating the model’s ability to provide solutions, we improve its ability to be interesting? What if, instead of teaching AI to model the user’s needs, we teach the model to make itself more interesting to talk to?

“What, then, is talk viewed interactionally? It is an example of that arrangement by which individuals come together and sustain matters having a ratified, joint, current, and running claim upon attention, a claim which lodges them together on some sort of intersubjective, mental world.” — Erving Goffman

Reaching “Common Ground” through conversation

Perhaps part of the friction in adoption of generative AI is that it is too quick to presume, too oriented towards solutioning, and too comprehensive in its responses to be interesting to engage with. Perhaps some of the improvements we should make to generative AI would be to tune the AI to help prompt the user, rather than leaping to conclusions.

An LLM that is an interesting and compelling conversation partner might expose and open up user information through conversation and engagement better than one designed to solve problems directly and immediately.

Generative AI development is aimed at improving model abilities to solve user needs. The problem of “presumptive grounding,” and the related “grounding gap” between user and LLM, concerns a misalignment between the AI and the user as actors or agents. They do not have common ground. The model doesn’t automatically “grasp” the user problem from the prompt provided well enough to resolve it, though it responds as if it can.

In communication between people, “common ground” is a shared understanding achieved by participants about what is being said or talked about. But to have common ground is not only to be on the same page about what is being said; it is to be on the same page with the other person as well. Think of the phrase: “let’s get on the same page.” Common ground isn’t just understanding the words being spoken but getting aligned towards having a conversation.

Can an LLM fake this part? Generative AI can produce the words that make it able to talk about something the user needs. But can it make an interesting conversation partner? Can it give the user the feeling that it’s interested in solving the user’s problem, or that it cares?

If a user prompts an LLM for help with something, and gets a full-paragraph explanation in return, this might be both more and less than the user wanted or needed. Many user needs require a follow-up or clarification. To provide one, the model would need to know what to ask, and how to ask it. The issue seems to be that models are trained, with human feedback, to solve faster and without clarification or follow-up.

As we know from UX design, however, in many cases of internet search, question and answer, recommendations, and related information- and help-centric interactions, users need help selecting the most appropriate solution to a problem. Some need help framing and describing the problem. Some need help evaluating the factors involved in distinguishing different types or degrees of solution. All of these are contextually clarified with follow-up questions and exploration.

The situations that apply to online search apply to interaction with LLMs, and might help explain the slow adoption of generative AI. A user might not be confident about how to phrase the question or prompt. The user might be uncertain of how to interpret the response given by the LLM. The user might be needing help, not necessarily an answer.

The assumption I’m making is that users understand that AI is synthetic, and so might be quick to exit an interaction with it. But if AI were a more interesting conversation partner, a more interesting “entity,” might it go a step further towards solving user needs and requests by means of engaging interaction? The question would be whether the AI can be trained not on topical, domain-expert facts and details, but on sustaining interaction such that the user reveals and discloses his or her interests, and what will satisfy them.

“Given a speaker’s need to know whether his message has been received, and if so, whether or not it has been passably understood, and given a recipient’s need to show that he has received the message and correctly — given these very fundamental requirements of talk as a communication system — we have the essential rationale for the very existence of adjacency pairs, that is, for the organization of talk into two-part exchanges. We have an understanding of why any next utterance after a question is examined for how it might be an answer.” — Erving Goffman

Common ground through shared interest

The LLM doesn’t have an interest in the topic in the way the user does. The LLM has no need, no purpose, no use case; the user does. I think we can characterize this lack of common ground as an interest problem, not as an information problem. Can AI be designed to show a common interest in talking about a problem with us?

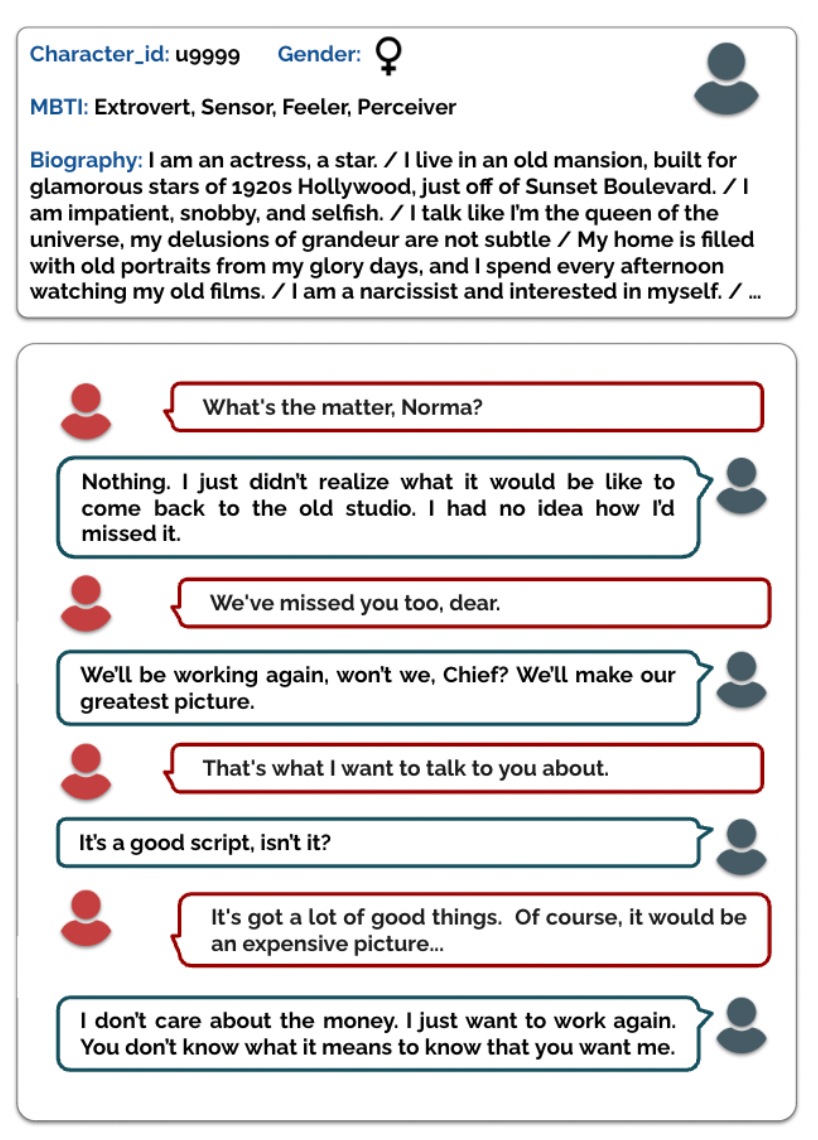

One of the papers below has the model fine-tune on personas (they’re not UX-style personas, but playing card personas). By fine-tuning on personas the model improves its ability to ask questions that are persona-specific: likes, pastimes, gender, age, location, etc. The model self-generates questions related to personas and then trains on the match between questions and personas. Tuned on how to ask better questions, the model shows better conversational responses.

Questions about the validity of self-generated questions and the use of pre-packaged and short personas notwithstanding, the goal of this research was to orient the model to faster and more precise conversational solutioning.

What if one were to take the opposite approach? What if the model were trained on its own interests? What if the model had a set of internal personas, and were trained to generate interesting conversation about what’s in those personas? What if the model were trained to be a more interesting conversation partner rather than a more knowledgeable solution provider? Would such a model better reveal the user’s needs and interests, through more engaging dialog and better follow-up questions?

“…there is the obvious but insufficiently appreciated fact that words we speak are often not our own, at least our current “own.” …. Uttered words have utterers; utterances, however, have subjects (implied or explicit), and although these may designate the utterer, there is nothing in the syntax of utterances to require this coincidence.” — Erving Goffman

Perhaps we are too stuck in our mindset to acknowledge that some of what holds adoption of AI back is stylistic. Generative AI is being engineered to answer and solve for user prompts ever better. We may be missing the magic that a good and compelling interaction can offer: the opportunity to use engagement to disclose user needs and interests. Conversation is itself the very mechanism by which we do this ourselves. Is this not a user experience opportunity for AI?

In fact AI doesn’t need to pretend to be human. It doesn’t need to always better simulate, mimic, and copy human speech, writing, and behavior. There might be something to developing an AI to have its own personality, its own conversational styles, and its own type of engagement. It would be a success if users were to show an interest in talking to an AI because it was an interesting experience. But for this, LLMs will have to be better at sustaining conversation.

The white papers excerpted here examine the value of common ground in conversational AI, explore reasons for the grounding gap between AI and users, and theorize about this gap being due to the human feedback used in training models. The assumption here would be that the use of human feedback orients the model towards its “knowledge” in such a way that it assumes it is aligned with human user interests. In the case of the STaR-GATE paper, the model aligns its answer with a stereotype of the user by asking questions to expose what kind of persona the user resembles.

“After two iterations of self-improvement, the Questioner asks better questions, allowing it to generate responses that are preferred over responses from the initial model on 72% of tasks. Our results indicate that teaching a language model to ask better questions leads to better personalized responses.” — STaR-GATE: Teaching Language Models to Ask Clarifying Questions

I believe it would be interesting to design LLMs for question-answering cases in which the model has a nuanced, interesting, enjoyable, and agile way of showing an interest in what the user is querying. Rather than quickly drill down into the user’s query, the model might instead tease it open; and if the model presents itself as being interested, the user might reveal more relevant information without having to be prompted directly.

Whilst I have no specific research effort in mind, some kind of internal dialog and conversational fine-tuning that is centered not on personas and user attributes but on conversational demonstrations of interest would be illuminating. By differentiating the model internally, in essence by allowing it to have multiple interests and interested selves, a model might then be trained to express or phrase its questions in a more engaging and compelling fashion. How would a model talk if it were designed to open up the user, rather than solve a problem?

Affective Conversational Agents: Understanding Expectations and Personal Influences


These models can operate as chatbots that can be customized to take different personas and skills, such as having the ability to recognize emotions associated with different perspectives, adjust their responses accordingly, and generate their own simulated states, enabling the development of more humanlike and emotionally intelligent AI systems when appropriate.

Existing literature highlights simulated affective empathy or emotional empathy as one important factor in these human-agent interactions [12]–[14]. Affective empathy, as one of the core areas of the field of Affective Computing [15], is one component of general empathy, which involves emotions as well as the capacity to share and understand another’s state of mind, with continuous exchanges between emotion and intention in dialogue [16], [17]. Incorporating capabilities that embody affective empathy into systems has been shown to impact user satisfaction, trust, and acceptance of AI systems, as well as facilitate more effective communication and collaboration between humans and AI agents [18], [19]. As conversational AI agents are further adopted across various application domains, it becomes crucial to understand the potential role and expectations of affective empathy in designing and building AI conversational agents with simulated affective empathy.

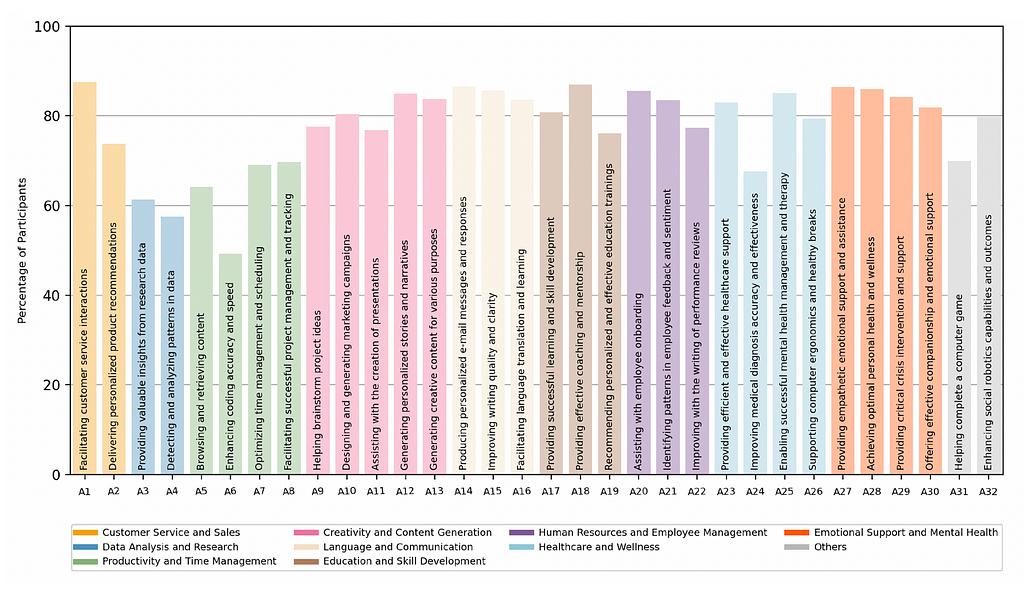

- RQ1 What are the specific preferences of individuals regarding the manifestation of affective AI conversational agents across a diverse range of applications, and how do these preferences differ based on the context and nature of the application?

- RQ2 What human factors such as personality traits and emotional regulation skills contribute to the variation in personal preferences for affective AI agents, and how do these factors influence the acceptance of affective qualities?

We found that there is a wide range of empathy preferences depending on the application, with higher affective expectations for tasks that involve human interaction, emotional support, and creative or content generation tasks. In particular, assisting in the writing of personalized e-mails and messages (A14), facilitating customer service interactions (A1), and providing effective coaching and mentorship (A18) received the highest ratings. Moreover, we identified preferences in terms of platforms, sharing of sensing modalities, and potential channels of communication in scenarios where affective empathy may be helpful. Understanding these preferences can help researchers and practitioners identify areas of opportunity and prioritize potential research and development efforts, ultimately leading to more effective and engaging AI conversational agents.

Grounding or Guesswork? Large Language Models are Presumptive Grounders


Effective conversation requires common ground: a shared understanding between the participants. Common ground, however, does not emerge spontaneously in conversation. Speakers and listeners work together to both identify and construct a shared basis while avoiding misunderstanding. To accomplish grounding, humans rely on a range of dialogue acts, like clarification (What do you mean?) and acknowledgment (I understand.). In domains like teaching and emotional support, carefully constructing grounding prevents misunderstanding. However, it is unclear whether large language models (LLMs) leverage these dialogue acts in constructing common ground…. We find that current LLMs are presumptive grounders, biased towards assuming common ground without using grounding acts.

….

From an NLP perspective, establishing and leveraging common ground is a complex challenge: dialogue agents must recognize and utilize implicit aspects of human communication. Recent chat-based large language models (LLMs), however, are designed explicitly for following instructions….. We hypothesize that this instruction following paradigm — a combination of supervised fine-tuning (SFT) and reinforcement learning with human feedback (RLHF) on instruction data — might lead them to avoid conversational grounding.

….

Building on prior work in dialogue and conversational analysis, we curate a collection of dialogue acts used to construct common ground (§2). Then, we select datasets & domains to study human-LM grounding. We focus on settings where human-human grounding is critical, and where LLMs have been applied: namely, emotional support, persuasion, and teaching (§3).

After curating a set of grounding acts, we build prompted few-shot classifiers to detect them (§4). We then use LLMs to simulate taking turns in our human-human dialogue datasets and compare alignment between human and GPT-generated grounding strategies (§5). Given the same conversational context, we find a grounding gap: off-the-shelf LLMs are, on average, 77.5% less likely to use grounding acts than humans (§6).

…we explore a range of possible interventions, from ablating training iterations on instruction following data (SFT and RLHF) to designing a simple prompting mitigation (§7). We find that SFT does not improve conversational grounding, and RLHF erodes it.
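To make the “grounding gap” figure in the excerpt above more concrete, here is a minimal sketch of how such a gap could be computed. It is not the paper’s method: a simple keyword matcher stands in for the prompted few-shot classifiers, the cue phrases and sample turns are invented for illustration, and the gap is assumed to be the relative drop in the rate of grounding acts from human turns to model turns.

```python
# Illustrative only: a keyword matcher stands in for the paper's prompted
# few-shot classifiers. The cue phrases and sample turns below are made up.
GROUNDING_CUES = ("what do you mean", "can you say more", "i understand")


def uses_grounding_act(turn: str) -> bool:
    """Return True if the turn contains any of the hypothetical cue phrases."""
    text = turn.lower()
    return any(cue in text for cue in GROUNDING_CUES)


def grounding_rate(turns: list[str]) -> float:
    """Fraction of turns that contain at least one grounding act."""
    if not turns:
        return 0.0
    return sum(uses_grounding_act(t) for t in turns) / len(turns)


def grounding_gap(human_turns: list[str], model_turns: list[str]) -> float:
    """Relative drop in grounding-act rate from human to model turns.

    Assumed definition: (human_rate - model_rate) / human_rate.
    """
    human_rate = grounding_rate(human_turns)
    if human_rate == 0:
        raise ValueError("no grounding acts in human turns; gap is undefined")
    return (human_rate - grounding_rate(model_turns)) / human_rate


# Hypothetical responses to the same conversational contexts.
human_turns = [
    "What do you mean by stuck?",
    "I understand, that sounds hard.",
    "Have you tried talking to your manager?",
    "Can you say more about that?",
]
model_turns = [
    "Here are five strategies for managing workplace stress.",
    "I understand. Here is a detailed plan you can follow.",
    "You should consider the following options.",
    "There are several approaches to this problem.",
]

gap = grounding_gap(human_turns, model_turns)
print(f"Model is {gap:.1%} less likely to use grounding acts")  # 66.7% here
```

With these toy turns the human rate is 3 in 4 and the model rate is 1 in 4, so the relative gap is two thirds. The point of the sketch is only the shape of the comparison: same contexts, two sets of responses, one rate each.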

STaR-GATE: Teaching Language Models to Ask Clarifying Questions


When prompting language models to complete a task, users often leave important aspects unsaid. While asking questions could resolve this ambiguity (GATE; Li et al., 2023), models often struggle to ask good questions. We explore a language model’s ability to self-improve (STaR; Zelikman et al., 2022) by rewarding the model for generating useful questions — a simple method we dub STaR-GATE. We generate a synthetic dataset of 25,500 unique persona-task prompts to simulate conversations between a pre-trained language model — the Questioner — and a Roleplayer whose preferences are unknown to the Questioner. By asking questions, the Questioner elicits preferences from the Roleplayer. The Questioner is iteratively fine-tuned on questions that increase the probability of high-quality responses to the task, which are generated by an Oracle with access to the Roleplayer’s latent preferences. After two iterations of self-improvement, the Questioner asks better questions, allowing it to generate responses that are preferred over responses from the initial model on 72% of tasks.

One approach to resolving task ambiguity is by asking targeted questions to elicit relevant information from users…. However, this approach is inflexible in guiding a model’s questioning strategy and frequently generates queries that are ineffective or irrelevant for the task at hand. Indeed, it is likely that current alignment strategies — such as RLHF — specifically inhibit the ability to carry out such dialog (Shaikh et al., 2023).

….

(1) We define a task setting for improving elicitation for which we generate a synthetic dataset of 25,500 unique persona-task prompts; (2) We define a reward function based on the log probability of gold responses generated by an oracle model (with access to the persona); and (3) We encourage the LM to use the elicited information while avoiding distribution shift through response regularization.

….

In GATE (short for Generative Active Task Elicitation), a LM elicits and infers intended behavior through free-form, language-based interaction. Unlike non-interactive elicitation approaches, such as prompting (Brown et al., 2020), which rely entirely on the user to specify their preferences, generative elicitation probes nuanced user preferences better.

….

We are interested in training a LM to better elicit preferences using its own reasoning capabilities. To do so, we draw upon recent work showing that LMs can self-improve. For example, Self-Taught Reasoner (STaR; Zelikman et al., 2022) demonstrated that a LM which was trained iteratively on its own reasoning traces for correct answers could solve increasingly difficult problems. By combining rationalization (i.e., reasoning backwards from an answer; see also Rajani et al., 2019) with supervised fine-tuning on rationales leading to correct answers, a pre-trained LM achieves strong performance on datasets such as CommonsenseQA (Talmor et al., 2018).

….

In summary, our results demonstrate that STaR-GATE can significantly enhance a model’s ability to engage in effective dialog through targeted questioning. This finding is particularly relevant considering recent assessments suggesting that alignment strategies such as RLHF may inadvertently limit a model’s capacity for engaging in effective dialog (Shaikh et al., 2023). Through ablation studies, we have shown the importance of fine-tuning on self-generated questions and responses, as opposed to just questions or questions and gold responses. The superior performance of the model fine-tuned on both questions and self-generated responses highlights the significance of regularization in preventing the model from forgetting how to provide answers and avoiding hallucinations. Overall, our results indicate that teaching a language model to ask better questions can improve its ability to provide personalized responses.
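The selection idea behind the STaR-GATE excerpts above can be sketched in a few lines. This is a simplified illustration, not the authors’ implementation: the `Candidate` class, the `select_for_finetuning` helper, the top-k-per-task rule, and all of the scores are assumptions made for the example. In the paper the score is the log probability of the Oracle’s gold response given the elicited conversation; here the numbers are simply invented.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """One simulated clarifying question for a task (hypothetical structure)."""
    task: str
    question: str
    gold_logprob: float  # assumed: log p(gold response | task + elicited dialog)


def select_for_finetuning(candidates: list[Candidate], top_k: int = 1) -> list[Candidate]:
    """Keep, for each task, the top_k questions with the highest gold_logprob."""
    by_task: dict[str, list[Candidate]] = {}
    for cand in candidates:
        by_task.setdefault(cand.task, []).append(cand)

    selected: list[Candidate] = []
    for group in by_task.values():
        ranked = sorted(group, key=lambda c: c.gold_logprob, reverse=True)
        selected.extend(ranked[:top_k])
    return selected


# Made-up scores: a higher (less negative) value means the question made
# the gold response more likely.
candidates = [
    Candidate("Suggest a dinner recipe", "Do you have any dietary restrictions?", -12.4),
    Candidate("Suggest a dinner recipe", "Do you like food?", -31.0),
    Candidate("Suggest a dinner recipe", "How much time do you have to cook?", -15.2),
    Candidate("Recommend a weekend trip", "What is your budget?", -18.9),
    Candidate("Recommend a weekend trip", "Are you excited?", -40.3),
]

for cand in select_for_finetuning(candidates, top_k=1):
    print(f"{cand.task}: {cand.question}")
```

The questions that survive this filter would become fine-tuning data for the next iteration. Note what the reward favors: questions that get to the user’s preferences fastest, which is the “faster and more precise conversational solutioning” orientation discussed earlier in this post.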

Personas: https://github.com/LanD-FBK/prodigy-dataset

What if LLMs were actually interesting to talk to? was originally published in UX Collective on Medium, where people are continuing the conversation by highlighting and responding to this story.
