I created a content design GPT to find out — here’s what I learned.

Content design is a growing specialism, especially in the world of government, education and health. I manage four content designers for Suffolk County Council, and our primary focus is to make local government information and services simpler, clearer and more accessible.

As a digitally-minded team, we’re always interested in new technologies. Naturally, then, generative artificial intelligence (AI) tools like ChatGPT, Claude and Jasper have prompted a lot of discussion about how we could use them.

But the emergence of custom GPTs got me wondering: do we actually need human content designers at all? Can I replace my whole team with just me feeding a GPT input and publishing the output? I decided to put my theory to the test: I created a content design GPT to see how it handled rewriting local government information.

For the purposes of this experiment, I ignored content designer activities like UI design (wireframing and prototyping) and UX research (surveys, focus groups, usability testing). I focused on the bread and butter: writing and editing content.

The Method

Step 1: Creating a GPT

First, I created my content design GPT (using ChatGPT-4, which requires a paid subscription). I named my creation ‘Contentify’, for no other reason than it sounds cool. I also gave it a funky profile/logo image (generated by DALL-E, obviously).

The Description and Instructions for the GPT give a high-level summary of what it’s designed to do. For extra depth, I also uploaded a summary of our content guidelines as additional Knowledge within the GPT configuration.

Step 2: Finding content to improve

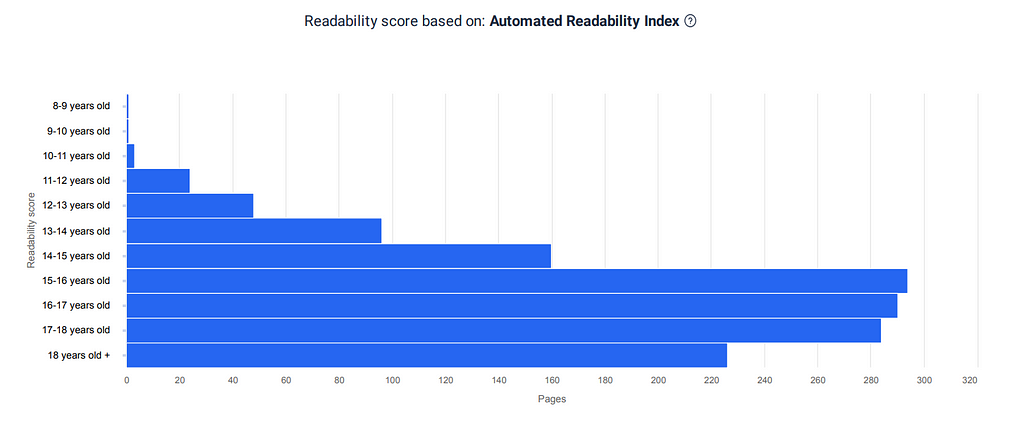

Next, I logged into Siteimprove, which our content design team uses to monitor quality assurance (QA) issues across our websites. Primarily, we use Siteimprove for checking broken links, misspellings and accessibility issues. But it also assesses the readability of your web content.

Although we aim for a reading age of around 10, most of our webpages sadly score much higher. In fact, Siteimprove told me that 226 of our pages had a reading age of 18-plus. I decided these pages, some of which require a post-graduate reading level, would be the focus of my experiment.

Step 3: Generating output

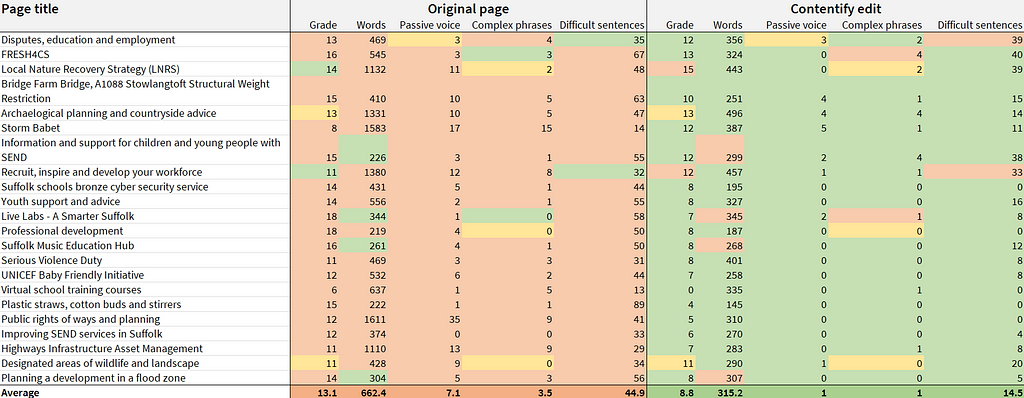

I randomly selected 22 of the 226 worst offenders on the Siteimprove readability report: a sample of approximately 10% of our least readable pages.
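For anyone who wants to repeat the sampling step in a reproducible way, here is a minimal Python sketch. It is an illustration only, not how the pages were picked in this experiment: the `sample_pages` helper, the seed and the `page-N` identifiers are all hypothetical stand-ins for a real export of page URLs.

```python
import random


def sample_pages(page_ids, sample_size, seed=None):
    """Return a random sample of pages, without replacement."""
    rng = random.Random(seed)  # a fixed seed makes the selection repeatable
    return rng.sample(page_ids, sample_size)


# Hypothetical placeholders standing in for the 226 least readable pages
pages = [f"page-{n}" for n in range(1, 227)]

sample = sample_pages(pages, 22, seed=1)

# Share of the worst offenders covered by the sample: 22 / 226
sample_share = len(sample) / len(pages)
print(f"Sampled {len(sample)} of {len(pages)} pages ({sample_share:.1%})")
```

Sampling without replacement guarantees that no page is picked twice, and 22 of 226 works out at just under 10%.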

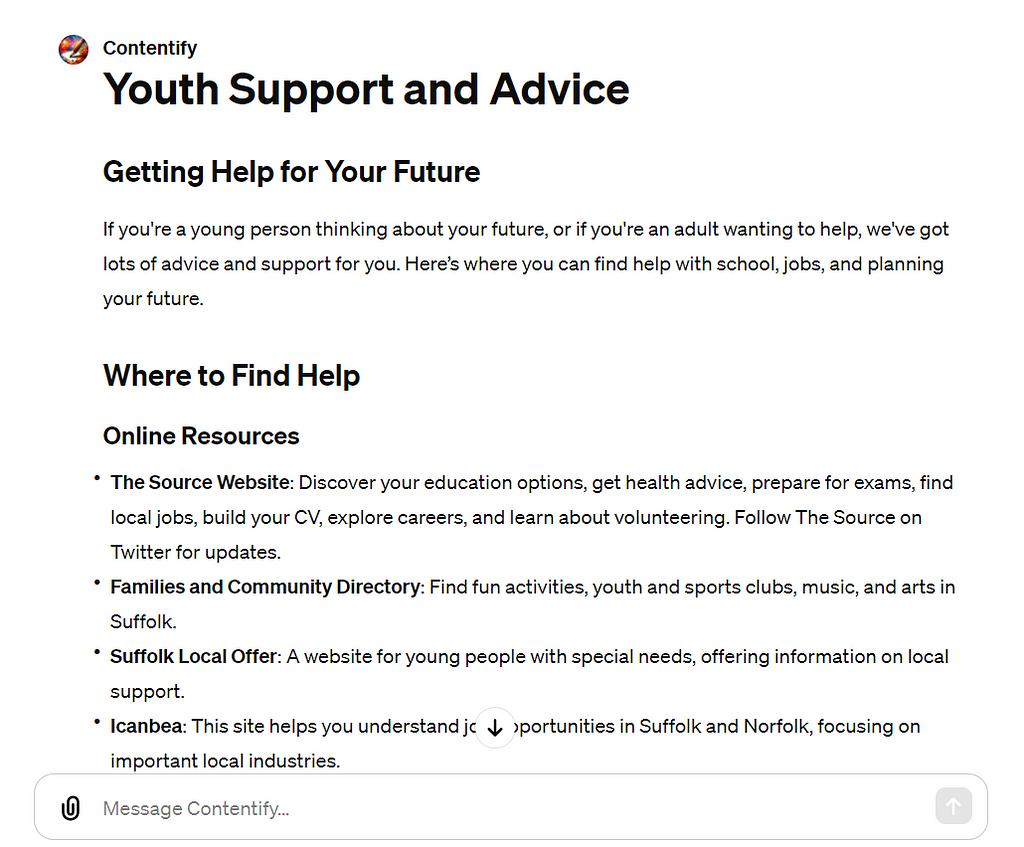

I then started feeding these pages to Contentify. I literally just pasted the content from the webpage and pressed enter, as I’d already configured the GPT to know what to do with any content submitted. As soon as I’d pressed enter, Contentify started re-writing. Even the longest pages with thousands of characters took less than a minute to generate a new edit.

Step 4: Content comparison

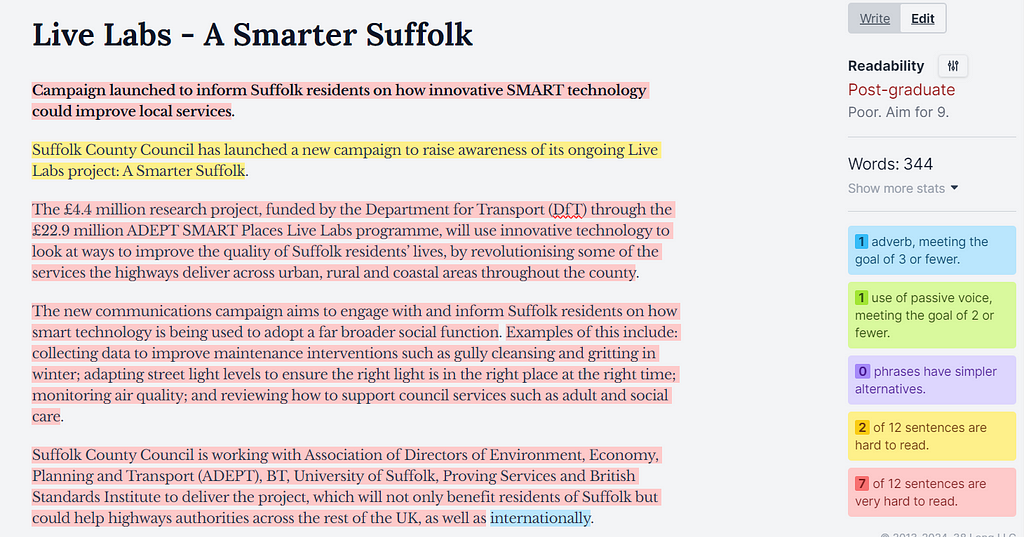

For the next stage, I opened two new browser tabs and loaded up Hemingway Editor, a free writing productivity tool. In one tab, I pasted the original webpage content, and in the other, I pasted the GPT edit (repeating this 22 times).

Hemingway evaluates writing on a few core metrics including:

- overall readability (using a grading system)

- use of adverbs

- instances of passive voice

- phrases that have simpler alternatives

- sentences that are either hard or very hard to read

Like Siteimprove, Hemingway did not like many of our original webpages. At all.

Step 5: The analysis

Finally, I compared the data from Hemingway for all original and GPT-edited content in Microsoft Excel. I focused on five metrics, and for all of them ‘the lower the better’ applies:

- Grade (readability)

- Wordcount

- Passive voice instances

- Complex phrase instances (with simpler alternatives)

- Percentage of sentences that were very hard to read

To make it easier to digest, I colour-coded the results, with green meaning better, red meaning worse and yellow meaning the same.
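The colour-coding logic is simple enough to sketch in Python for anyone who would rather not do it by hand in Excel. This is an illustration under assumptions, not the spreadsheet used in the experiment: the metric keys and the example figures below are made up.

```python
# Hypothetical keys for the five metrics; lower is better for every one
METRICS = ["grade", "word_count", "passive_voice", "complex_phrases", "very_hard_pct"]


def colour_code(original, edited):
    """Label each metric green (better), red (worse) or yellow (the same)."""
    colours = {}
    for metric in METRICS:
        before, after = original[metric], edited[metric]
        if after < before:
            colours[metric] = "green"   # the edit lowered the score
        elif after > before:
            colours[metric] = "red"     # the edit raised the score
        else:
            colours[metric] = "yellow"  # no change
    return colours


# Made-up figures for a single page, for illustration only
original_page = {"grade": 14, "word_count": 820, "passive_voice": 9,
                 "complex_phrases": 6, "very_hard_pct": 35}
edited_page = {"grade": 8, "word_count": 410, "passive_voice": 9,
               "complex_phrases": 7, "very_hard_pct": 5}

colours = colour_code(original_page, edited_page)
for metric, colour in colours.items():
    print(f"{metric}: {colour}")
```

Bear in mind that a green word count only says the page got shorter, not that it kept everything it needed to say.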

The Results

Ok, so now the dust has settled, what did I actually learn?

First, let’s look at the positives and negatives of how Contentify performed.

What the GPT did well

- The GPT improved readability for almost every page (reducing the reading age required to understand the information). Contentify drastically reduced instances of passive/complex language, as well as the ratio of sentences that were very hard to read.

- The GPT also used lots of headings, bullet points and bold text to break up the content and make it easier to scan. This is important, as users don’t read entire webpages — they scan them looking for information that’s relevant.

- Finally, the GPT typically reduced the wordcount of the page, making everything more concise and minimising the cognitive load (amount of total information to process).

So far, so good. If you look at the Excel screenshot above, you can see that almost all the green is on the GPT side. On paper, the GPT made the content better almost every time.

So is it time to announce the end of human content designers? Can I replace my team with a GPT to process all our content?

Well, not quite. Let’s look at the negatives…

What the GPT did badly

- Contentify often ended up oversimplifying and removing information, for example by rewriting a dense paragraph into a single bullet point. Reducing wordcount is good if you’re saying the same thing in a more concise way, but not if you’re removing whole chunks of information people might need to know (and we might have a statutory duty to provide).

- While the information architecture of the GPT’s edit was generally an improvement, the content sometimes felt overly formatted. The AI seemed intent on forcing information into a super-concise headings-and-bullets layout every time, even when it wasn’t the most natural structure. This made things easier to scan, but sometimes harder to understand.

- The GPT also got the tone wrong in many places, using phrases like “we’re excited to announce” and “stay tuned for updates”. This is too informal for government. There were also multiple instances where the wording was awkward, for example: “Serious violence includes using knives or guns badly.” Badly?

Removing information, inappropriate structure and the wrong tone of voice are not trivial issues that go away with a quick polish of the GPT text. In many cases it felt like Contentify had brilliantly re-worked the original content… in a way that suggested it didn’t understand it at all. That really matters in government, where even subtle differences can:

- change the meaning of the information

- undermine the authority of the messenger

- compromise the trust between service provider and user

Discussion

Now we’ve digested the results, what does it all mean?

AI isn’t a viable like-for-like replacement for human content designers — not even an improved version of my Contentify GPT.

Why? Because even just focusing on writing and editing content — ignoring other content design activities like research, design, testing, training and quality assurance — a GPT simply can’t replicate what a human content designer brings to the table.

This shouldn’t be surprising, as AI isn’t designed to replicate how a human brain actually works. Large Language Models (LLMs) are designed to simulate appropriate responses that a human might give to certain questions or prompts, based on massive amounts of training data. The way AI maps tokens and vectors and learns the relationship between concepts is fascinating, but it’s not the same as human thought processes.

Content designers understand how the world works in a way that my GPT can’t. They have:

- empathy

- natural semantic knowledge

- social, cultural and political awareness

- relationships with the people providing and using the service

These are all factors that define what the final content needs to be — and a lot of this may always exist outside of any LLM training data. That means only humans have the capacity to truly understand everything that’s needed for the end product.

So we still need humans. Not only does AI lack contextual understanding of content in the real world, it also lacks real-world agency. That matters because content design isn’t just an output — it’s a process that only people can really do. Ultimately, content design is about people, not technology.

So should we avoid using AI as content designers? Nope.

There isn’t a binary choice between rejecting AI and replacing people. Complementing human content designers with GPTs like Contentify can make us more effective and productive.

Let’s look at some ways it could be used effectively — and safely.

Recommendations

- First of all, let’s be clear: content designers should use AI tools like ChatGPT, Claude and Jasper. They can help us transform dense information into something normal people can actually read and understand. We should be embracing these tools, not neglecting them out of fear or ignorance.

- These tools can — and should — be used to re-write complex, technical passages of text into clearer, simpler and more accessible language. This can then be validated by tools like Hemingway, Grammarly and old-school techniques, like an actual human reading it and giving feedback.

- GPTs and other AI tools can also re-write entire webpages and documents in seconds, improving both the readability and structure so the content is much easier to scan. However, be very careful when doing this — a lot of manual checking will be required to ensure information or meaning hasn’t been lost in the AI’s quest for simplification. You’ll need to cross-reference against the original text to be confident statutory or essential details haven’t disappeared.

- The ideal scenario for using these tools may be configuring an AI instance (like a GPT) customised to your organisation’s content guidelines. However, even using out-of-the-box AI writing tools will make you more effective than ignoring them.

- Finally, this is boring but needs to be said: be careful about data protection when routinely feeding stuff into AI tools. If you’re generally working with information that’s going to end up in the public domain, you’ll probably be fine. But it might be worth your organisation having its own corporate policy for using generative AI.
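As a small aid to the manual cross-referencing recommended above, here is a rough Python sketch that flags figures (fees, deadlines, dates) that appear in the original text but not in the AI rewrite. It is a crude heuristic under stated assumptions, not a substitute for a human check: the `missing_numbers` helper, the regular expression and the example text are all hypothetical, and it only catches digits, not lost wording or meaning.

```python
import re

# Matches runs of digits, optionally joined by . , : or / (e.g. 45.50, 31/03)
NUMBER_PATTERN = re.compile(r"\d+(?:[.,:/]\d+)*")


def missing_numbers(original, rewrite):
    """Return figures found in the original but absent from the rewrite."""
    rewrite_numbers = set(NUMBER_PATTERN.findall(rewrite))
    missing = []
    for number in NUMBER_PATTERN.findall(original):
        if number not in rewrite_numbers and number not in missing:
            missing.append(number)  # keep first-seen order, no duplicates
    return missing


# Made-up example text, for illustration only
original_text = ("You must apply within 28 days of the decision. "
                 "The fee is £45.50 and appeals close on 31 March.")
rewritten_text = "Apply within 28 days. Appeals close soon."

lost = missing_numbers(original_text, rewritten_text)
print("Figures missing from the rewrite:", lost)
```

Anything the check flags is a prompt to reread that part of the original. An empty list does not prove that nothing was lost.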

About the author

Andrew Tipp is a lead content designer and digital UX professional. He works in local government for Suffolk County Council, where he manages a content design team. You can follow him on Medium and connect on LinkedIn.

Can AI replace human content designers? was originally published in UX Collective on Medium, where people are continuing the conversation by highlighting and responding to this story.
