How we can build more useful AI interactions into our keyboards.

Last week, Microsoft announced a change so large that they could justify calling it the first significant change to the Windows keyboard in nearly three decades. Coming to hardware vendors in 2024 and (presumably) the next iteration of Surface devices, we’ll start to see a key dedicated to Microsoft’s Copilot, snuggled in next to our arrow keys. It’s prime real estate. It’s a shame we couldn’t find a better tenant.

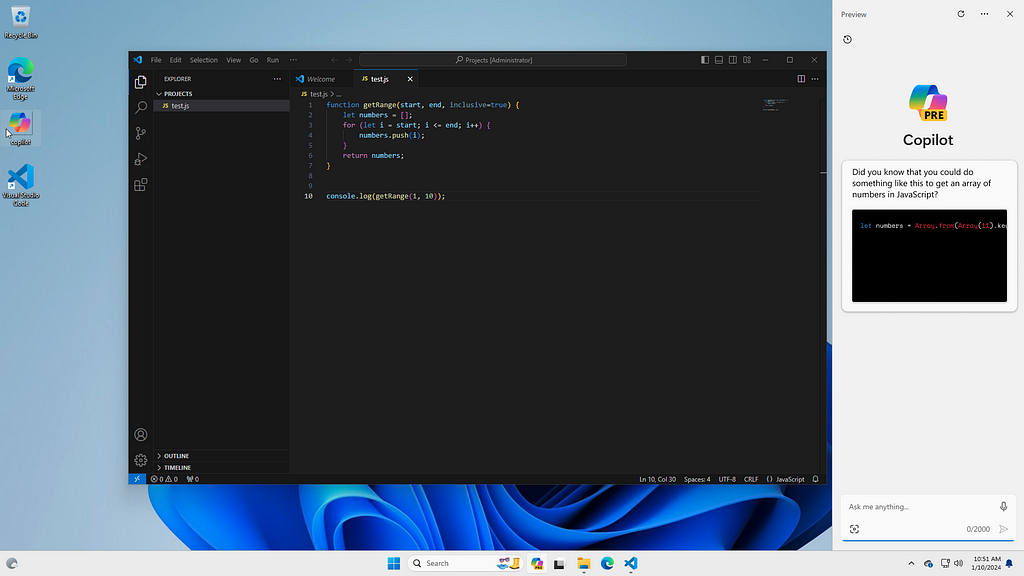

But we’ll get to that in a moment. The move signals Microsoft’s eagerness to bring Copilot front-and-center, capable of bearing down on any workload we happen to have present on our screens. This follows a flurry of development. After its initial integration into Bing search and the Edge browser, at the start of last year, Microsoft moved quickly to integrate OpenAI’s generative language models into every service under its umbrella, including Microsoft Office and its suite of tools. In September, the service came to the Windows Desktop. In November, the company rebranded each of these integrations under the more inclusive Copilot name.

If this isn’t all-in, I don’t know what is. I’ve seen hundreds of job listings on Microsoft’s career page, advertising some kind of involvement in the Copilot project. After lackluster adoption of its AI-powered Bing search, Microsoft seems eager to find something people want to use that will justify its exorbitant spending on OpenAI and its services. In its original advertisements for the Copilot rebrand, it seemed to conjure every potentially useful generative trick up its sleeve: summarizing web pages, writing messages, filling images, adjusting system settings, and so on. In a recent interview, Satya Nadella said that he uses it to help him draft memos.

While many of these features might be genuinely useful for certain workflows, they fundamentally miss the original purpose of the personal computer: helping us to be better problem solvers. Back when GPT-3 was first released, Katelyn Donnelly employed the copilot analogy as well, but as a warning, not a branding guideline. Asking “should planes fly themselves”, the essay argues that we run the risk of not developing certain important skills or, once attained, allowing them to atrophy. I won’t focus on deskilling here, but it deserves emphasizing that the alleviation of drudgery should not be the final goal of our creativity in the LLM space, and it isn’t always a desirable one.

In the parlance of an ancient Chinese proverb, the kinds of tools currently offered in Microsoft’s Copilot strategy are more akin to “giving the people fish.” Instead of fostering workflows that encourage the development of skills to solve big problems, they release us from the drudgery of solving many small problems. Instead of just giving us fish, Microsoft should employ its (1) special relationship with OpenAI, (2) omnipresence in the desktop computing space, and (3) special relationship with our personal information, via Outlook, OneDrive, and Office, to use that shiny new Copilot button for a better purpose: teaching us to fish. But what does this look like, if not the current model? To answer that question, I’ll again turn to the analogy of the coach. For the sake of argument, let’s call it Coach 365.

Better Keyboards

The most important function of the personal computer is one that gets lost in a sea of content consumption. Our hypothetical Coach 365 shouldn’t be focused on creating content for us or retrieving someone else’s content for us to consume. Rather, it should be designed to prompt us towards connecting the content we have already created or curated. Instead of using my keyboard to prompt an AI assistant to write an email for me, I want it to give me feedback on the content I’m writing. Does the intent of the paragraph I just wrote happen to relate, strongly, to that piece by Katelyn Donnelly that I read a while back? That would make a great reference!

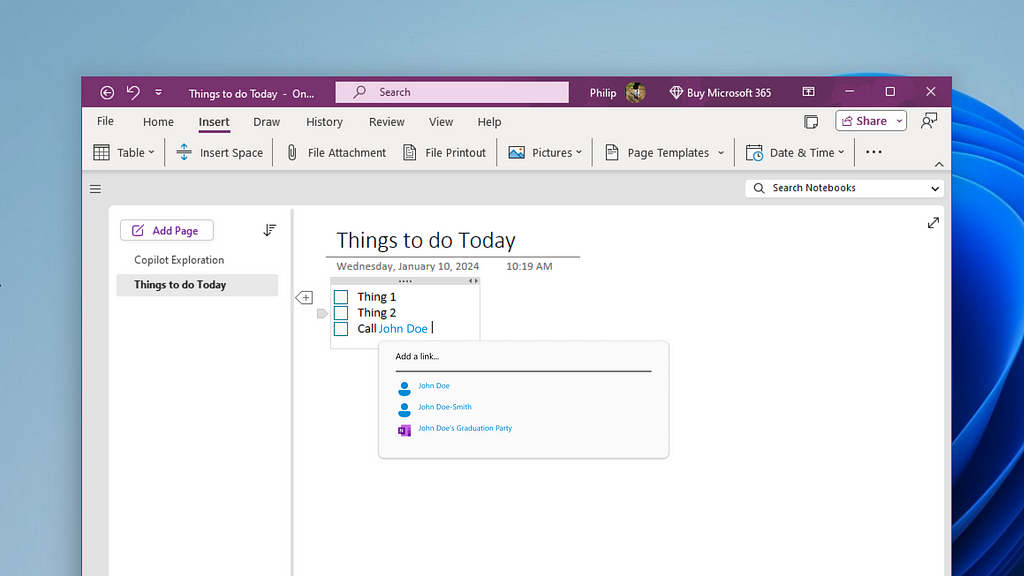

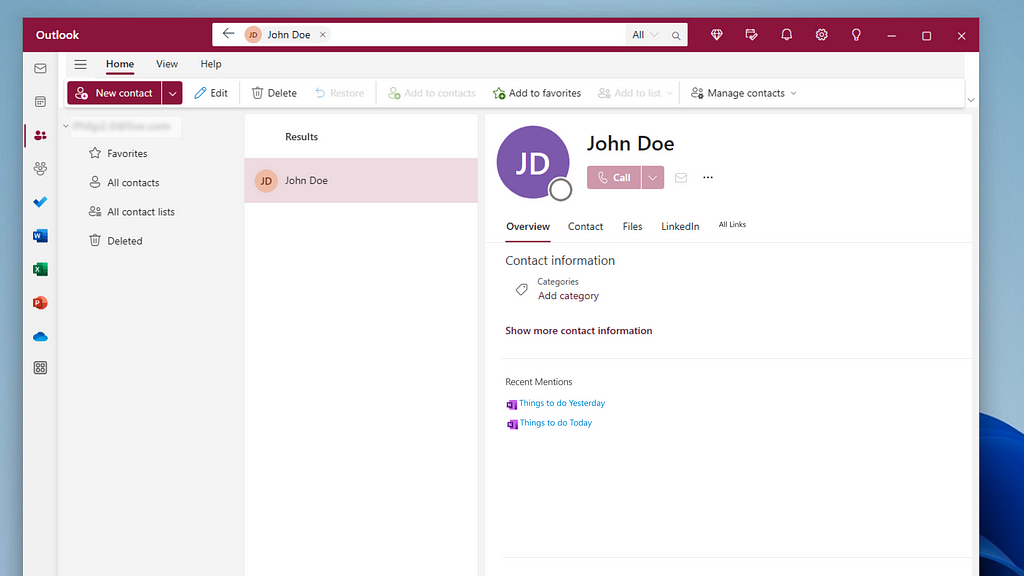

What if we explicitly mention a person or place in our research or notes? What if our handy-dandy keyboard prompted us to replace that static reference with a link? Here, I imagine a system similar to the now ubiquitous linked mention, used in Slack, Apple Messages, and other messaging systems. There, typing an @ sign before a name links the message to that person. Instead of remaining in the context of a single conversation, these links would extend across the system. Notes that I make about friends or colleagues would organically grow as references to their contact cards. This would provide the backbone for contextualizing and solving problems related to the people in our lives.

As an example, we recently served chocolate-peanut-butter pie at a party, completely forgetting that my brother-in-law has a peanut allergy. What if, in the process of writing down a grocery list, linked to an event, which is in turn linked to the contacts attending, Coach 365 had surfaced an old note, reminding me that peanuts and brothers-in-law don’t go together?

This functionality goes well beyond contacts, potentially including any object in our technological life: photographs, geotags, notes, music recordings, and so forth. At this scale and capacity, our virtual assistants would become truly helpful. I like to think of it as a regression test, but for life. If we have already found a solution to a particular problem or recorded information vital to a solution, Coach 365 should help us to remember it.
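To make the pie example concrete, here is a minimal Python sketch of how such a lookup might work. Everything in it is hypothetical: the `Contact`, `Event`, and `GroceryList` types, the `surface_warnings` function, and the naive keyword match are stand-ins for whatever linking and retrieval a real assistant would use.

```python
from dataclasses import dataclass, field


@dataclass
class Contact:
    name: str
    notes: list[str] = field(default_factory=list)  # free-form notes linked to this person


@dataclass
class Event:
    title: str
    attendees: list[Contact] = field(default_factory=list)


@dataclass
class GroceryList:
    event: Event
    items: list[str] = field(default_factory=list)


def surface_warnings(grocery_list: GroceryList) -> list[str]:
    """Follow list -> event -> attendees -> notes; return notes that mention an item."""
    warnings = []
    for contact in grocery_list.event.attendees:
        for note in contact.notes:
            for item in grocery_list.items:
                # Naive substring match; a real system would need something smarter.
                if item.lower() in note.lower():
                    warnings.append(f"{contact.name}: {note} (matches '{item}')")
    return warnings


brother_in_law = Contact("Brother-in-law", notes=["Allergic to peanuts"])
party = Event("Party", attendees=[brother_in_law])
shopping = GroceryList(party, items=["chocolate", "peanuts", "pie crust"])

for warning in surface_warnings(shopping):
    print(warning)
```

The point of the sketch is the chain of links, not the matching: once the grocery list knows its event and the event knows its attendees, an old note about an allergy is only a few hops away.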

Deleting Things, Not Creating Them

In an older essay, I wrote about the importance of keeping our personal web of content, as one does a garden. That includes pruning pieces of information that are no longer pertinent to the problems we are seeking to solve. While what counts as pertinent is subjective, we should remember that less is still more.

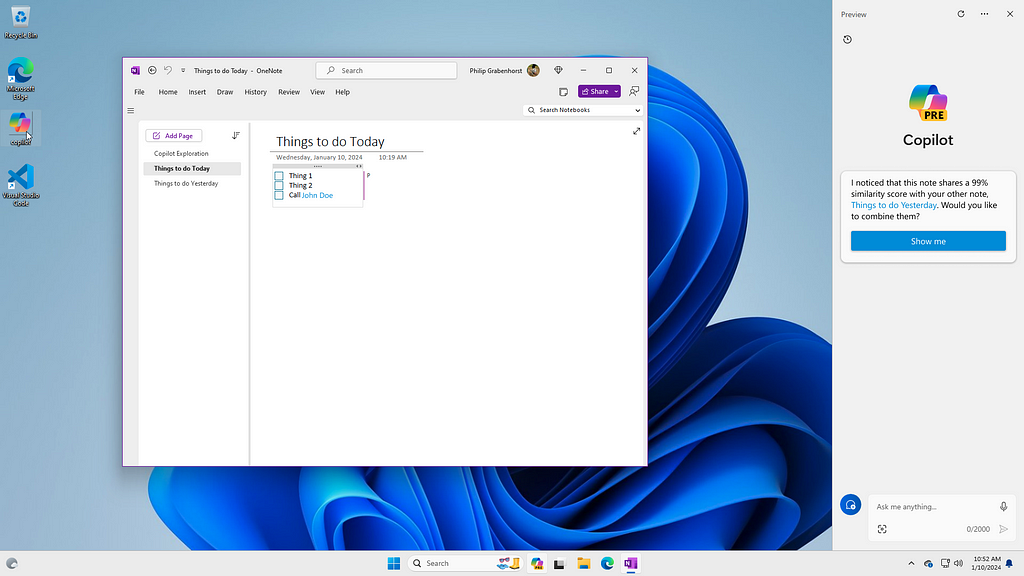

In addition to recommending connections amongst old information, our Coach 365 should also help us see when a new piece of content (notes, documents, what have you) is similar to older pieces of content. As we are in the act of writing, or soon thereafter, it could compare what we have just created to what we have created before. In doing so, we can avoid duplication. Beyond duplication, it provides an opportunity to assess whether the problem, concept, or piece we are creating is truly distinct or if it deserves incorporation into an existing structure. I’m imagining this as being especially useful in educational or research settings.
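As a rough illustration of that comparison, the Python sketch below flags older notes that share most of their words with a new draft. It assumes a simple word-overlap (Jaccard) score and an arbitrary threshold of 0.5. The function names and sample notes are invented for the example, and a production system would more likely use embeddings or another semantic measure.

```python
import re


def word_set(text: str) -> set[str]:
    """Lowercase the text and split it into a set of distinct words."""
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def jaccard(a: str, b: str) -> float:
    """Share of distinct words the two texts have in common (0.0 to 1.0)."""
    words_a, words_b = word_set(a), word_set(b)
    if not words_a and not words_b:
        return 0.0
    return len(words_a & words_b) / len(words_a | words_b)


def similar_notes(new_note: str, old_notes: list[str], threshold: float = 0.5) -> list[str]:
    """Return older notes whose word overlap with the new note meets the threshold."""
    return [old for old in old_notes if jaccard(new_note, old) >= threshold]


archive = [
    "Ideas for pruning my personal notes garden",
    "Recipe for chocolate peanut butter pie",
]
draft = "Ideas for pruning my notes garden this spring"
print(similar_notes(draft, archive))
```

Here the draft overlaps heavily with the first archived note and barely at all with the recipe, so only the first would be offered as a candidate for merging.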

New Solutions to Old Problems

Now for a use-case that Microsoft is especially well-primed to take advantage of. If an artificially intelligent assistant knew (1) the context of our problem and (2) our goal, it would be poised to inform us about features or workflows that we were unaware of. What do we do when we don’t know how to do something? We Google it. What about the problems we don’t Google? How often do we develop inefficiencies in our workflows, because we developed a methodology that keeps “kinda” working? So many times, I have witnessed computer users clicking through reams of emails with the intent to delete them, unaware of the “Cmd+A” shortcut, or searching for an undo button, completely oblivious to the “Cmd+Z” shortcut. Basically, it’s the kind of thing Clippy used to do (albeit poorly).

But we could do more. Here, I also have in mind problems of computer engineering. How many times do we, as software engineers, implement an algorithm or method inefficiently, simply because we’re unaware of a simple, more efficient solution? In the contexts of pair coding and technical interviews, we get a chance to unearth these holes in our understanding and have our intuitions challenged. But what if our Coach 365 proactively informed us of the more efficient solution? In this way, we would still benefit from the learning experience of performing our own generative tasks. At the same time, we would be “alleviating drudgery” by becoming better, more efficient problem solvers ourselves. How’s that for a win/win?
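As a small, hypothetical illustration of the kind of nudge this could be, consider checking a list for duplicates. The two functions below are toy examples written for this purpose. The first is the version many of us write by default, and the second is the one a coach might point us toward.

```python
def has_duplicates_slow(items: list[str]) -> bool:
    """Compare every pair of items: quadratic time in the length of the list."""
    for i in range(len(items)):
        for j in range(i + 1, len(items)):
            if items[i] == items[j]:
                return True
    return False


def has_duplicates_fast(items: list[str]) -> bool:
    """Track seen items in a set: roughly linear time for hashable items."""
    seen = set()
    for item in items:
        if item in seen:
            return True
        seen.add(item)
    return False


emails = ["a@example.com", "b@example.com", "a@example.com"]
print(has_duplicates_slow(emails), has_duplicates_fast(emails))
```

Both functions give the same answer. The difference only shows up as the list grows, which is exactly the kind of thing we tend not to notice until someone points it out.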

This doesn’t have to stop at the edge of the technical practices. Instead of asking Coach 365 to draft a memo or a piece of marketing material, what if it took a competitive approach? It could follow our lines of argument, pointing out common logical pitfalls, places where our reasoning doesn’t hold up to superficial scrutiny, or places where we otherwise exhibit some clear bias. In doing so, it could even start to coach us out of insulation, ignorance, and complacency.

Copilot, Codependent

Meanwhile, back at the farm, Copilot seems to be offering us a hodgepodge of disconnected, supposedly time-saving conveniences. Apart from narrow use cases, such as the Edge browser or the current page of a Word Document, there is a serious disconnect between the content we are currently working with and the feedback Copilot provides. Even Microsoft’s front-page piece, the Copilot system prompt on Windows 11, seems devoid of context.

Simple contextual queries are met with confusion. “What’s on my screen right now?” Let me search the web for that, it says. “Who am I?” An interesting philosophical question, Copilot quips. “What’s on my calendar?” Let me search the web for that, too. Though placed in a window of prestige, Copilot seems to still believe that it is housed firmly in the Bing search browser, ready to help me search for and purchase self-help books in the pursuit of answering that burning “Who am I?” question. These are real interactions, by the way…

https://medium.com/media/99deba95e5bd128216139417e6f8d942/href

To the Copilot team’s credit, though, these problems are presently expensive to solve. It is by no means a small undertaking to index, compare, and generate text for so many potentially unrelated interactions. Local device limitations, the speed of existing models, and other frictions all play a part. Nevertheless, Microsoft offers some services that begin to approach the level of self-referential connectivity I spoke of above; they’re just hidden behind the Microsoft Copilot 365 “For Enterprise” banner. Even then, the number of messages a user can send is limited. Worse, though, it’s tailored in such a way that it just gives us more fish than the unpaid tier.

Copilot or Coach?

These limitations are quickly receding, though. In a paper published last month, researchers at Apple outlined a method by which we might be able to sidestep the memory limitations of personal devices and locally run ever larger, more precise models. Even as the implementations of our machine learning models become more clever, our personal technologies are still getting faster and faster, hurtling toward the physical limits of computation. Very soon, we will be able to equip language-generating models with more context.

So what will we do with them? Will we continue to ask our systems to give us fish, or will we learn how to fish? We need an artificially intelligent assistant that is aware of our personal data and our relationships with others. It should be equipped with mechanisms that allow it to retain our interactions with it, as well as use sentiment analysis to perform fine-tuning in response to the feedback we give it. It should help us to create new connections with and recommend new applications for the information we curate. It should be competitive, and not just passive, refining our existing workflows and helping us make the most of our computational systems. It should coach us out of complacency, too, helping us recognize where the solutions we generate are inefficient or insufficient.

If we get this right, imagine the problems we could solve. I believe that building Coach 365 is imperative: something that will equip each of us to build, create, and solve problems at levels of performance previously only achieved by a select few. I believe that creating these systems will be a bootstrap of sorts, raising our collective problem-solving capacity such that it allows us to solve the greatest challenges facing humanity today and whatever the future has to throw at us. Let’s do it. Let’s build it. Let’s go fishing.

AI interactions: not a copilot, but a coach was originally published in UX Collective on Medium, where people are continuing the conversation by highlighting and responding to this story.
