There is a lot we all don’t know.

The first draft of this article was completely different.

Originally, it was about how privacy is overrated. We act so afraid of losing it, yet in an increasingly digital world full of personalized content, it also feels impossible to keep. It’s just small scraps of information after all, so why does it matter?

After talking to a friend, I knew that the first draft needed to be scrapped… and burned. But as much as I knew I was wrong, I still couldn’t shake the feeling that I didn’t understand what the hullabaloo was all about. Why were there companies dedicated to protecting search histories? Why did messages about cookies pop up on every site I visited? Why did every app I downloaded have more privacy opt-in/out questions than ever before?

Why does everyone care so much?

And then I remembered that I’m Gen Z.

As someone who had their first cellphone in 3rd grade, I’ve never known the price of privacy.

In fact, I don’t know if I’ve ever actually had it. I’ve never been “off the grid” for a minute, let alone most of my life. Privacy was something my parents + grandparents cared about, but it never seemed to affect me.

When talking to my friends of a similar age about data collection, they feel the same way: “They just want to market to me better? Let ’em try” or “Who even knows what they are taking? I don’t need it”. Here we are, presented with so many options + toggles + questions that just make our lives easier — the price? A smidge of data here and there.

I’ve been uploading photos of myself onto some form of the internet since I was 12 years old. I’ve been reacting to photos of other people since around the same age. I grew up in a time when it was weird if random acquaintances on my social media didn’t know what I was doing every weekend. So all of this talk about privacy… it never really existed to me.

A lot of a young person’s identity feels like it is tied to an online image – staying relevant and keeping people updated on what they are doing and who they are now, today. It feels impossible to hold things just for yourself. To practice privacy can feel almost selfish. We are supposed to be always accessible (at work, socially, in relationships) – the green dot should be on. Our “status” is always known, for transparency. There are so many nuances tied up in privacy for young people that we gloss over in an effort to tackle other, bigger issues (screen time, dark UX, data leaks, etc.), but treating accessibility and relevance as social status is a prime example of a social mindset that can put our data at risk.

It’s hard for kids who have never known privacy to feel entitled to it.

“If something is free, you’re the product.” — Richard Serra, 1973

We are living in a digital age accelerated by access to data and what we can do with it. So many things have become faster and simpler because of the presence of data, computing power, and algorithms. As a UX Designer, it’s very clear to me how big a role access to data plays in our lives. But I also don’t think we fully understand the repercussions yet. Everything is so new, so shiny – it’s hard to see where our data could be manipulated long-term. Once we check the box, toggle on, click accept, it’s not really ours anymore. But whose is it? Where does it go?

“People in the U.S. still struggle to understand the nature and scope of the data collected about them, according to a recent survey by the Pew Research Center, and only 9% believe they have “a lot of control” over the data that is collected about them. Still, the vast majority, 74%, say it is very important to them to be in control of who can get that information.”

While the vast majority of data collection explanations are glossed over (or worse, purposefully confusing), I have seen a few examples where more care and time was spent explaining where my data may end up.

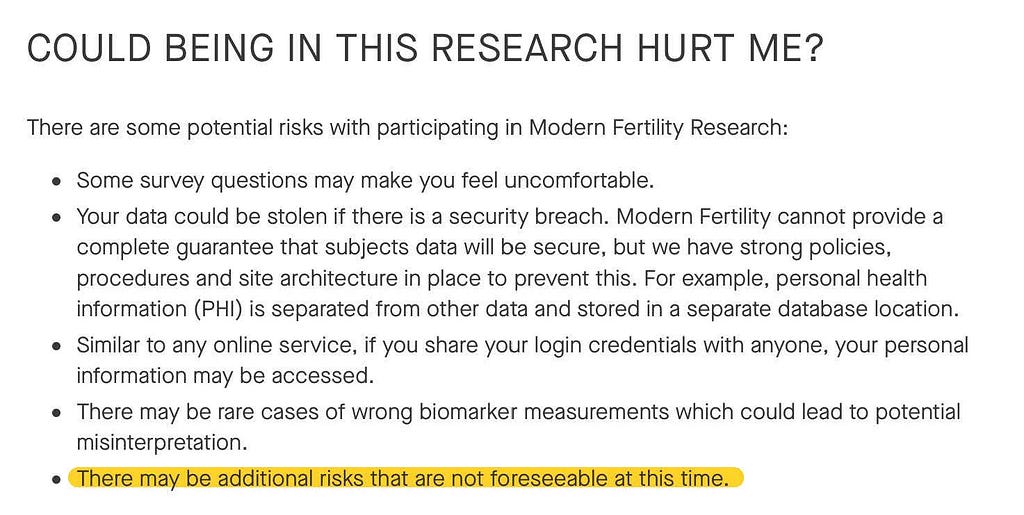

I recently signed up for Modern Fertility and was struck by the section around research participation. Normally, I do a lot of skipping over long paragraphs and information (hypocritical much?), but the large text and all caps caught my eye.

The specific note of “potential risks” gave me pause – this is a phrase that is seldom used when explaining data collection. It is inherently “scary” wording, planting an idea in readers’ minds that does give them… pause. Not something most companies opt to do when in the throes of making money.

Because of this, I read through this section more carefully than normal and the last sentence really stuck out to me. “There may be additional risks that are not foreseeable at this time”… and they are so right. The honesty was a little startling and, though it didn’t provide much detail, I felt very seen. This is really where the crux of this article lies – there may be unknown future risks that should still be given importance so that we can hold that knowledge in the back of our minds, always.

In 2021, Apple’s privacy initiative brought a huge change to iPhones: App Tracking Transparency, introduced with iOS 14.5. Apps now have to show an Apple-designed prompt asking users whether they want to allow that app to track their activity across other companies’ apps and websites.

Instead of finding privacy settings hidden in your preferences section, it’s the first thing you see when opening the app. It has created a huge stir in the field of targeted ads and marketing revenue, but more importantly it creates a… pause. The pause needed for a user to connect what they decide to do with its potential effects. I’m certainly not saying that Apple’s new initiative is perfect, but it’s a step in an important direction.

In the end, we are all still only one “toggle on” away from privacy being taken from our hands. Taken from us for all future uses that might only get spelled out in a sporadic terms + conditions email from a company we forgot we signed up with.

People should always have the freedom to choose for themselves. Period. The questions I’m starting to ask are — How do we design for generations that don’t value privacy? Instead of taking advantage of ignorance, how do we slow down and help complicated systems become tangible?

I hope these are questions that we as a society start asking and we as a community start answering. There is a lot we all don’t know.

As this is an opinion piece, I’d love to hear your thoughts! Disagree with me in the comments or bring some new information to light that I may have missed. Thanks for reading!

Interested in reading more about digital privacy? Here are a few articles I’ve collected that keep me thinking:

- Privacy in the Metaverse might be Impossible (new research study)

- Bite into privacy: the future of personalised UX in a cookieless web

- TikTok’s unprecedented ability to engineer the “Consent of the Masses”

- Personalized experiences: When do they become behaviour change?

Understanding the price of privacy was originally published in UX Collective on Medium, where people are continuing the conversation by highlighting and responding to this story.
