How to manage the inherent uncertainty of AI.

Trust makes relationships go round — whether it is between people, businesses, or the products we rely on. It’s built on a mix of qualities like consistency, reliability, and integrity. When any one of these breaks, the relationship cracks with it. A friend who disappears for a year, a car that won’t start half the time, a teammate who never pulls their weight — trust thins out, and we start looking for alternatives.

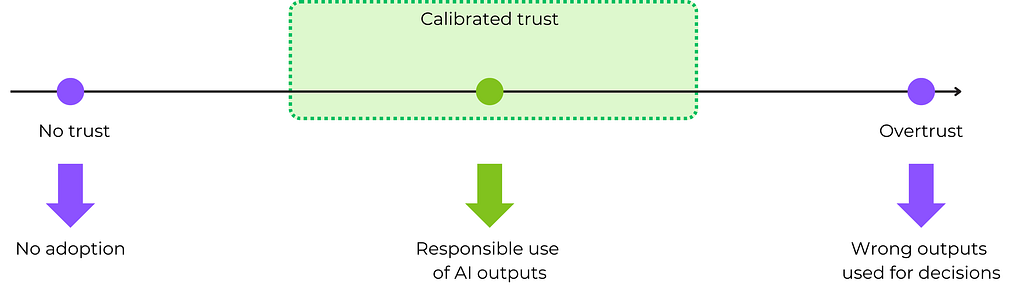

AI is a tough nut to crack when it comes to trust. By nature, it’s probabilistic, uncertain, and makes mistakes. Earning user trust takes real effort, and once you’ve earned it, you often need to dial it back. In AI, overtrust is dangerous. If users accept AI outputs by default, errors will snowball into bad decisions with real-world consequences [1][2]. Your users should become responsible collaborators who calibrate their trust by questioning, adjusting, and taking ownership of how they use the system (figure 1).

So, how do you build appropriate trust into your AI system? When working with AI teams, I often see trust reduced to model accuracy. “Users don’t trust the system because it makes mistakes.” The assumption is that trust is a technical problem, and engineers or data scientists need to “fix” it.

But that’s only part of the picture. In reality, trust is primarily built through how users understand and experience your product — how well it understands their needs, communicates its value, and supports them in the moment. This article focuses on the user-facing dimensions of trust, namely the addressed use case, value creation, and user experience.

We’ll explore practical techniques and design patterns for building and calibrating trust. I will illustrate these with insights from a real-world project where we used AI to support R&D teams at a major automotive manufacturer.

Use case: Optimize for relevance and impact

Choosing the right use case is the first strategic step towards trust. Today, AI has become fairly accessible, and we see AI features like chat pop up in every other product. Often, they feel generic, awkward, and disconnected from real user needs. If you don’t want to fall into this “me-too” bucket, you need to show users that you understand them and that your AI is there to help, not distract.

At Anacode, we recently partnered with the R&D division of a major automotive manufacturer. Our goal was to track trends and emerging technologies to support the company’s innovation efforts. We kicked things off with a motivated group of internal AI champions, but soon, we ran into skepticism from the wider team. These were seasoned engineers and researchers — people who pride themselves on knowing their domain inside and out. The last thing they wanted was a black box spitting out unsolicited advice. But a tool that subtly enhances their expertise, boosts their outcomes, and improves their standing in the company? For sure, that would be interesting.

Solving the right problem

The problem of our users wasn’t a lack of intelligence or insight — it was signal overload. Every week, new patents, startups, funding rounds, and papers landed in their inboxes and newsfeeds. They needed help seeing what actually mattered, so we framed the system as a radar that could spot early signals, surface momentum, and point experts toward what’s worth investigating. This directly addressed their pain points and brought initial buy-in.

More generally, your use case needs to check two boxes:

- It must be a problem where AI can shine and create significant value (cf. chapter 2 of my book The Art of AI Product Development on discovering the best AI opportunities).

- It must be perceived as a problem by the users. When AI steps into a space users are frustrated by — time-consuming, noisy, repetitive tasks — it’s appreciated. It supports users and clears space to focus on the parts they care about, such as judgment, creativity, and strategy.

Seamlessly integrating into existing workflows

Your AI should support your users, rather than disrupting their workflows and adding cognitive burden. In our case, the R&D teams already had well-worn (though not always efficient) processes and artefacts — briefs, reports, technical review decks, etc. A tool that introduced friction wouldn’t stick.

Here’s a straightforward way to plan for seamless integration:

- Start by mapping the existing, “human” process.

- Pinpoint where AI can add real value — whether by automating grunt work, surfacing hidden insights, or speeding up analysis.

- Build a tailored AI journey around these moments.

- Plan to deliver outputs in the formats users already trust.

In our case, the obvious move was to start with the first step in the process, namely technology landscape monitoring, where large quantities of data need to be combined, structured, and distilled into insights (figure 3).

Align impact with trust

The higher the stakes of your AI, the more carefully you need to build and support trust. A bad movie recommendation on Netflix is harmless. But a flawed R&D insight can lead to a negative impact down the road, especially if it is formulated in the upbeat, self-confident language of a modern LLM. Initially, our system monitored and quantified trends. That was a relatively safe role, which supported early adoption. But as we ventured into evaluating and recommending specific innovations, skepticism increased. Experts questioned how AI derived its suggestions and whether it understood real industry challenges.

To mitigate this, we started with low-key features like highlighting competitor innovations rather than advising on the company’s own R&D activities. Over time, the AI got more competent, and we expanded its scope. Framing is also important — by talking about innovation ideas rather than recommendations, we made clear that users were still in the driving seat. The expert responsibility of assessing and refining the ideas was still on their side.

Your trust capital is built and reinforced in a virtuous cycle. As users build trust in small features, they will be more likely to accept “more AI” over time [8]. In turn, as your AI system keeps improving, it becomes more likely to live up to growing expectations as you increase its scope.

Value

When your product gets out, it should hook users with immediate, tangible value. That initial win opens the door for engagement. But trust doesn’t build overnight — you need to keep delivering and raising the bar. As users rely more on your system, value must compound. This ongoing progress is what helps offset AI’s inherent imperfections — its uncertainty, occasional errors, and evolving boundaries.

Provide a fast track to measurable value

AI can create value along different dimensions, such as productivity, process improvement, and emotional benefits (see this post for more details). In my experience, starting with simple productivity and efficiency benefits is the best way to get your foot in the door. Find ways to save time and cost for your users. This is measurable, tangible, and often appreciated on a personal level. Once you have built an initial layer of trust, you can expand to more subtle benefits.

In our example, users struggled with monitoring large quantities of data over time. Thus, we started by providing verified and relevant bits of insight, leaving users wanting more. These were concise, factual summaries of relevant trends, for example: “Solid-state battery patents are up 40% year-over-year. Toyota and Hyundai lead the activity. Capital is shifting toward next-gen anode materials.” Backed by relevant data, this insight quickly made it into internal reports and attracted more users. That was not because it was new information (everyone knew solid-state was heating up), but because it was framed cleanly and reliably and saved hours of hunting and verification.

That’s where AI gains its right to play: cost or time savings that are apparent in existing workflows and decisions. By contrast, if experts have to engage in a long discovery to see the value of your AI tool, they’ll likely drop it (“I might as well search for this data myself”).

Demonstrate integrity with realistic communication

This principle is simple, but hard to stick to. In a world of marketing superlatives and inflated promises, being honest can feel scary. But in the long run, it pays off. Avoid the trap of overpromising, whether it is about the accuracy, scope, or value of your AI. If the product doesn’t hold up, users will get frustrated and disengage (“We’ve seen this before — big claims, no follow-through.”)

In the UX section, you will also learn how to communicate limitations and uncertainty inside the product using design patterns like confidence scores and oversight prompts.

Inject domain expertise into your system

Especially in B2B, trust crumbles fast when AI doesn’t “get” its domain. If your system talks in generic terms or misses the nuance experts expect, forcing them to edit the outputs constantly, they’ll walk away. At the latest, this will happen when they think something along the lines of “I’m spending as much time fixing this as I would doing it from scratch”.

In our case, early versions of the system flagged “autonomous vehicle software” as a key trend. This was correct, but it felt shallow and obvious. After fine-tuning for industry-specific relevance, the system started surfacing more granular signals — like anode-free lithium battery R&D, or the use of self-supervised learning in in-cabin driver monitoring systems. It got proficient at using the jargon of its users, and the insights could be directly reused.

My article Injecting domain expertise into your AI system provides a comprehensive overview of the methods to customize your AI system for specific domains. There is a learning curve to this — your AI doesn’t need to act like a domain expert from the beginning. Often, giving users an opportunity to enrich your system with their domain knowledge will not only improve performance but also create a sense of ownership and deepen engagement. This leads us to the next trust-building technique, namely the visible compounding of value through continuous improvement.

Commit to continuous improvement

One of the best practices of successful AI development is to launch early and collect relevant data and feedback for improvement. Releasing an imperfect system can be scary, but in the end, AI is never perfect, so get used to the idea. In the first three months, our system surfaced several useful signals, but many insights were still not to the point. While this was enough to demonstrate initial value, we were clearly in a race against time. Once users use your AI more frequently, they develop a relationship with it. Their expectations grow as they become more proficient with the tool and want to rely on it in their daily work.

You need to catch this momentum and continuously optimise your system. Fortunately, in most cases, you can create feedback mechanisms that not only point you to the shortcomings of your system, but also allow you to collect meaningful training data to improve it over time. The section on “Feedback and learning” will dive into different ways to collect feedback from your users.

The use case you address and the value you provide are at the core of your AI product strategy and positioning. They motivate your users to buy and use your application. But the trust muscle is built over time. On a day-to-day basis, your users will be interacting with your AI via the user interface, and this is where the real fun begins.

User experience

There are plenty of design patterns you can use to help users build and calibrate their trust. These relate to transparency, control, and feedback collection. In the end, they boil down to mitigating the uncertainty and the risks of errors made by the AI.

Transparency: Aligning user expectations

Users build mental models of the products they interact with [7]. Trust will break if it turns out that these models are not aligned with reality. Now, in AI, a lot of the action happens “under the hood,” away from the eyes of your users. An AI app that relies on ChatGPT will behave differently than one that uses a deeply customised LLM — though the interaction will look the same on the surface. To avoid mismatched expectations, you need to crack open the black box of your system and explain its relevant workings to your users. Here are some design patterns to achieve this:

- In-context explanations: Help users understand how an output was generated, right where they see it. For example, a system tracking emerging technologies might explain a trend by breaking down its inputs — like recent patent spikes, venture capital activity, and competitor filings. In graphical interfaces, this can happen with interactive elements like tooltips. In conversational interfaces, you provide the explanation directly in the conversational flow.

- Footprints / chains-of-thought let users trace how the AI reached its conclusion. In our case, clicking on a suggested innovation revealed its source trail: the documents, events, and filters that led to its prioritization.

- Caveats and disclaimers can be used to highlight known limitations of an output or dataset. If data coverage is sparse, or signals conflict, clearly state that early so users don’t make decisions based on flawed assumptions.

- Citations: Link outputs to source data — whether those are documents, reports, or APIs — so users can verify the AI output themselves.

- Everboarding and guidance: Keep users informed as the system evolves. When features or logic change, provide lightweight tooltips or embedded guidance to explain what’s different and why it matters.

- Verification-focused explanations: Instead of just explaining how the output was formed, help users evaluate its reliability. Include self-critiques (“This signal may be inflated by cross-market overlap”) or alternative interpretations to activate user judgment.
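To make these patterns more concrete, here is a minimal Python sketch of how an insight could carry its own explanation, source trail, and caveats. The names `Source`, `Insight`, and `render`, as well as the example URL, are hypothetical and chosen for illustration only; they do not describe how our system was actually built.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Source:
    title: str  # human-readable name of the document or dataset
    url: str    # link the user can follow to verify the claim


@dataclass
class Insight:
    summary: str
    drivers: List[str] = field(default_factory=list)     # inputs behind the output
    sources: List[Source] = field(default_factory=list)  # citations / source trail
    caveats: List[str] = field(default_factory=list)     # known limitations


def render(insight: Insight) -> str:
    """Render an insight as plain text with its explanation attached."""
    lines = [insight.summary]
    if insight.drivers:
        lines.append("Why this surfaced: " + "; ".join(insight.drivers))
    for number, source in enumerate(insight.sources, start=1):
        lines.append(f"[{number}] {source.title} ({source.url})")
    for caveat in insight.caveats:
        lines.append(f"Caveat: {caveat}")
    if not insight.sources:
        # Assumption: an output without sources should be marked as unverified.
        lines.append("Caveat: no sources attached, treat as unverified.")
    return "\n".join(lines)


# Hypothetical example data, for illustration only.
example = Insight(
    summary="Solid-state battery patents are rising.",
    drivers=["recent patent spike", "venture capital activity"],
    sources=[Source("Patent filings export", "https://example.com/patents")],
    caveats=["Data coverage is sparse for some markets."],
)
print(render(example))
```

Keeping explanation, citations, and caveats in one structure means the interface can decide how to show them (tooltip, side panel, or chat message) without ever losing them.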

When thinking about transparency, pay special attention to communicating the uncertainty of your AI. This will help users calibrate their trust and spot errors. Here are some techniques:

- Confidence scores: Use simple, visible indicators — like percentages or a low/moderate/high scale — to signal how much confidence the system has in a result.

- For text content, you can use both visual and linguistic indicators of uncertainty. For example, you can visually highlight phrases, numbers, or facts that need validation.

- You can also prompt the generating LLM to use uncertain language to hedge questionable content (“This may suggest…,” “Data is inconclusive…,” “Further validation recommended.”, etc.)
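The following Python sketch shows one possible way to combine a confidence scale with hedged language. It assumes your system already produces a confidence score between 0 and 1. The thresholds, the function names, and the prompt wording are illustrative assumptions that you would need to tune for your own product.

```python
def confidence_label(score: float) -> str:
    """Map a numeric score to a low/moderate/high label (thresholds are assumptions)."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score >= 0.8:
        return "high"
    if score >= 0.5:
        return "moderate"
    return "low"


# Hypothetical hedging instructions passed to the generating LLM.
HEDGING_INSTRUCTIONS = {
    "high": "State the finding plainly.",
    "moderate": "Use cautious wording such as 'This may suggest...'.",
    "low": (
        "Flag the finding as uncertain, for example 'Data is inconclusive...', "
        "and end with 'Further validation recommended.'"
    ),
}


def build_prompt(score: float) -> str:
    """Build an instruction that asks the model to hedge according to confidence."""
    label = confidence_label(score)
    return (
        "Summarize the trend for an R&D expert. "
        f"Confidence: {label}. {HEDGING_INSTRUCTIONS[label]}"
    )


print(confidence_label(0.62))
print(build_prompt(0.3))
```

The same label can drive both the visible indicator in the interface and the tone of the generated text, so the two never contradict each other.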

AI explanations are a living topic — they will evolve quickly as you start getting feedback, questions, and complaints from your users. I prepared a cheatsheet that you can have at hand when updating your explanations, which you can download here.

Control: Putting users into the driving seat

Transparent outputs have little value if users cannot act on the additional information. To establish collaboration between users and AI, you need to give them control and a sense of responsibility. Here are some design patterns that can be applied before, during, and after the AI’s job:

- Pre-task setup: Let users configure the scope of AI tasks — e.g., data sources, timeframes, entity filters — before launching analysis. This increases relevance and avoids generic results.

- Adjustable signal weighting: Let users decide how much weight to give different sources or criteria. In trend monitoring, one user might prioritize venture activity; another, academic citations. Explain the impact of the signals.

- Inline editing and actions: Make parts of the output editable or replaceable in place. Users should be able to refine vague terms or tweak filters without rerunning the entire task.

- Emergency stop (abort generation): Allow users to halt generation processes if they notice early errors or misalignment. As most of us have experienced when using ChatGPT and similar tools, this can save a lot of time when the user sees that the AI got off-track.

- Intentional friction: Integrate friction to activate critical thinking. Challenge users with questions like: “What assumptions does this result rely on?” or “Could this be explained by noise?” These prompts for critical thinking are also called cognitive forcing functions (CFFs; cf. [5]). They help calibrate reliance, especially early in the trust journey.
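As an illustration of adjustable signal weighting, the sketch below computes a trend score as a weighted average of normalized signals and returns each signal’s contribution so that its impact can be explained to the user. The signal names, values, and weights are hypothetical, and a weighted average is only one of several reasonable scoring schemes.

```python
from typing import Dict, Tuple


def weighted_score(
    signals: Dict[str, float], weights: Dict[str, float]
) -> Tuple[float, Dict[str, float]]:
    """Return the weighted average of the signals and each signal's contribution."""
    if any(weight < 0 for weight in weights.values()):
        raise ValueError("weights must not be negative")
    total_weight = sum(weights.get(name, 0.0) for name in signals)
    if total_weight <= 0:
        raise ValueError("at least one signal needs a positive weight")
    contributions = {
        name: value * weights.get(name, 0.0) / total_weight
        for name, value in signals.items()
    }
    return sum(contributions.values()), contributions


# Hypothetical signal strengths, already normalized to the range 0..1.
signals = {"venture_activity": 0.8, "academic_citations": 0.4, "patent_filings": 0.6}

# Two users with different priorities see different scores for the same trend.
investor_view = {"venture_activity": 3, "academic_citations": 1, "patent_filings": 1}
researcher_view = {"venture_activity": 1, "academic_citations": 3, "patent_filings": 1}

for label, weights in [("investor", investor_view), ("researcher", researcher_view)]:
    score, parts = weighted_score(signals, weights)
    print(label, round(score, 2), {name: round(part, 2) for name, part in parts.items()})
```

Showing the per-signal contributions next to the total score is a simple way to explain the impact of each weight.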

Error management: Mitigate the risks of AI mistakes

Even if your engineers optimize AI performance to death, mistakes will still slip through to your users. You need to turn your users into collaborators who help you catch these failures and turn them into learning opportunities. Here are some best practices to keep them aware and alert:

- Highlight error potentials during onboarding: Set realistic expectations about model performance. “About 1 in 10 results may need review” is better than pretending the system is flawless. Communicate the strengths and weaknesses of the system, and introduce users to both strong and flawed outputs. This shapes realistic expectations and shows how collaboration with AI works in practice.

- Inline feedback mechanisms: Enable users to flag issues directly where they occur — misclassifications, false positives, outdated data.

- Immediate acknowledgment and recovery: After feedback is submitted, respond visibly and offer a fix: “Thanks for flagging. Would you like to regenerate the output without that item?” Communicate the impact of the user’s feedback as precisely as possible: “Your feedback will be integrated in the next release at the end of the month.” This encourages users to build their feedback muscle.

Feedback and learning: Let the system grow with the user

Trust deepens when users feel they have a voice. If they know they can shape the system — and see that their input drives real improvements — they stop seeing the AI as a black box and start treating it as a partner. That’s how you turn passive users into co-creators.

Start by capturing implicit feedback: Where do users zoom in? What do they edit or delete? What do they consistently ignore? These behavioral signals are gold for tuning relevance. Then, layer in explicit feedback mechanisms:

- Binary feedback: A simple thumbs up/down gives you an instant signal on output quality. It’s low-effort and useful at scale.

- Free-text inputs: Especially useful early on, when you’re still learning how your system is missing the mark. It requires more effort to parse, but chances are that the insights are worth it.

- Structured feedback: As your system matures and common failure patterns emerge, offer users predefined categories to speed up feedback and reduce ambiguity.
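The three explicit feedback types can live in a single record, which makes them easy to aggregate later. Here is a minimal Python sketch; the `Feedback` class, the `summarize` function, and the category names are assumptions for illustration, and real categories should come from the failure patterns you actually observe.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical failure categories for structured feedback.
CATEGORIES = {"misclassification", "false_positive", "outdated_data"}


@dataclass
class Feedback:
    output_id: str
    thumbs_up: Optional[bool] = None  # binary feedback
    comment: Optional[str] = None     # free-text input
    category: Optional[str] = None    # structured feedback

    def __post_init__(self) -> None:
        if self.category is not None and self.category not in CATEGORIES:
            raise ValueError(f"unknown category: {self.category}")


def summarize(feedback: List[Feedback]) -> dict:
    """Aggregate feedback into signals a product team can act on."""
    votes = [item.thumbs_up for item in feedback if item.thumbs_up is not None]
    categories = Counter(item.category for item in feedback if item.category)
    return {
        "approval_rate": sum(votes) / len(votes) if votes else None,
        "top_categories": categories.most_common(3),
        "comments_to_review": [item.comment for item in feedback if item.comment],
    }


# Hypothetical feedback entries, for illustration only.
collected = [
    Feedback("insight-1", thumbs_up=True),
    Feedback("insight-2", thumbs_up=False, category="outdated_data"),
    Feedback("insight-3", comment="Too generic for our domain"),
    Feedback("insight-4", thumbs_up=True, category="outdated_data"),
]
print(summarize(collected))
```

Making every field optional lets you start with thumbs and comments and add categories later, without changing the record format.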

Integrating these patterns is technically easy, but without clear incentives, many busy users might ignore them. Here are some tips to pull your users into the feedback loop:

- Communicate the impact of the feedback clearly. This will show users that it helps improve the system, which is a reward in itself.

- Consider constructive extrinsic rewards. One advanced mechanism we used was unlocking deeper customization and control for users who consistently engage with the system. This not only incentivizes them to provide feedback, but also supports power users without overwhelming novices.

Summary

Trust builds gradually, across every interaction, every insight delivered (or missed), every decision your users make with your AI by their side. Time is important — your AI will (hopefully) improve, producing more relevant insights and fewer errors. Your users will also change — they’ll become more skilled, more reliant, and more demanding. If your system doesn’t grow with them, confidence and trust can erode.

That’s why trust in AI requires careful planning and a layered strategy:

- Use case and value communication create the strategic foundation: Is this solving a real problem? Can users clearly see the benefit?

- UX handles the day-to-day work of trust: transparency, control, and feedback loops shape how users build their mental model of your product and calibrate trust in real time.

AI is tricky by design — uncertainty and errors are part of the package. But if you’re intentional and build your product for clarity and collaboration, trust can become your strongest asset and differentiator. It will fuel adoption, invite feedback, and turn cautious users into advocates.

References and further readings

- Howard, A. (2024). In AI We Trust — Too Much? MIT Sloan Management Review. Retrieved from https://sloanreview.mit.edu/article/in-ai-we-trust-too-much/

- Sponheim, C. (2024). When Should We Trust AI? Magic-8-Ball Thinking. Nielsen Norman Group. Retrieved from https://www.nngroup.com/articles/ai-magic-8-ball/

- McKinsey & Company (2023). Building AI trust: The key role of explainability. QuantumBlack, AI by McKinsey. https://www.mckinsey.com/capabilities/quantumblack/our-insights/building-ai-trust-the-key-role-of-explainability

- Microsoft Aether Working Group (2022). Overreliance on AI: Literature Review. Microsoft Research. https://www.microsoft.com/en-us/research/wp-content/uploads/2022/06/Aether-Overreliance-on-AI-Review-Final-6.21.22.pdf

- Microsoft Research (2025). Appropriate Reliance: Lessons Learned from Deploying AI Systems. https://www.microsoft.com/en-us/research/wp-content/uploads/2025/03/Appropriate-Reliance-Lessons-Learned-Published-2025-3-3.pdf

- Microsoft Learn (n.d.). Overreliance on AI — Guidance for Product Teams. Microsoft AI Playbook. https://learn.microsoft.com/en-us/ai/playbook/technology-guidance/overreliance-on-ai/overreliance-on-ai

- Google PAIR (2019). People + AI Guidebook: Designing human-centered AI products. https://pair.withgoogle.com/guidebook/

- Kniuksta, D., & Vedel, S. (2023). Deep dive: Engineering artificial intelligence for trust. Mind the Product. Retrieved from https://www.mindtheproduct.com/deep-dive-engineering-artificial-intelligence-for-trust/

- DNV (2023). Building trust in AI: Creating responsible AI systems through digital assurance. DNV — Future of Digital Assurance. https://www.dnv.com/research/future-of-digital-assurance/building-trust-in-ai/

- Lipenkova, J. (2025). The Art of AI Product Development, chapter 10. Manning Publications.

- Anacode GmbH (2025). Cheatsheet: Explaining your AI systems.

Note: Unless otherwise noted, all images are by the author.

Building and calibrating trust in AI was originally published in UX Collective on Medium.
